Carnegie Mellon computer searches web 24/7 to analyze images and teach itself common sense. (Credit: Carnegie Mellon University) A computer program called the Never Ending Image Learner (NEIL) is now running 24 hours a day at Carnegie Mellon University, searching the Web for images, doing its best to understand them.

And as it builds a growing visual database, it is gathering common sense on a massive scale. NEIL leverages recent advances in computer vision that enable computer programs to identify and label objects in images, to characterize scenes and to recognize attributes, such as colors, lighting and materials, all with a minimum of human supervision.

In turn, the data it generates will further enhance the ability of computers to understand the visual world. But NEIL also makes associations between these things to obtain common sense information: cars often are found on roads, buildings tend to be vertical, and ducks look sort of like geese. Microsoft Research demos Project Adam machine-learning object-recognition software. “Cortana, what breed is this?”

(credit: Microsoft Research) Microsoft Research introduced “Project Adam” AI machine-learning object recognition software at its 2014 Microsoft Research Faculty Summit. The goal of Project Adam is to enable software to visually recognize any object — an ambitious project, given the immense neural network in human brains that makes those kinds of associations possible through trillions of connections. Could Google Glass Track Your Emotional Response to Ads? The head-mounted device, presumably Google Glass, would communicate with a server, relaying information about pupil dilation captured by eye-tracking cameras.

The system could store "an emotional state indication associated with one or more of the identified items" in an external scene, according to the patent. Though the patent specifies that "personal identifying data may be removed from the data and provided to the advertisers as anonymous analytics" in an opt-out system, the idea is to charge advertisers, using a new pay-per-gaze metric, when users view ads online or on billboards, in magazines, newspapers, and other types of media.

"Thus, the gaze tracking system described herein offers a mechanism to track and bill offline advertisements in the manner similar to popular online advertisement schemes," the patent states. The fees could scale depending on the duration viewed as well as the inferred emotional state. View the patent below:

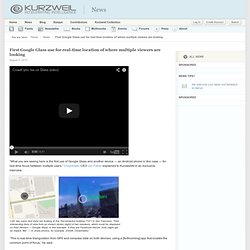

Individual recognition. First Google Glass use for real-time location of where multiple viewers are looking. “What you are seeing here is the first use of Google Glass and another device — an Android phone in this case — for real-time focus between multiple users,” CrowdOptic CEO Jon Fisher explained to KurzweilAI in an exclusive interview.

Left: two users (red dots) are looking at the Transamerica building (“22”) in San Francisco. This Robo-Nose Can Smell Better Than You. A new digital camera that makes eyes at dragonflies. Joggobot: The Flying Quadrocopter Jogging Companion. June 5th, 2012 by Range. It’s not always easy staying motivated when you train alone.

This is one of the reasons why I find the Joggobot interesting. It’s kind of like a robotic coach that follows you around as you run. Based on the AR.Drone, the hovering Joggobot quadrotor will attract stares from fellow runners, but it certainly makes it easier to keep pace when your smartphone or GPS isn’t enough to keep you motivated. Joggobot: An AR.Drone that keeps you company while you jog.

10 Sensor Innovations Driving the Digital Health Revolution. Google Glass Advances with Superimposed Controls & More. Google's Patent Background. Wearable systems can integrate various elements, such as miniaturized computers, input devices, sensors, detectors, image displays, wireless communication devices as well as image and audio processors, into a device that can be worn by a user. Such devices provide a mobile and lightweight solution to communicating, computing and interacting with one's environment. With the advance of technologies associated with wearable systems and miniaturized optical elements, it has become possible to consider wearable compact optical displays that augment the wearer's experience of the real world.

By placing an image display element close to the wearer's eye(s), an artificial image can be made to overlay the wearer's view of the real world. AOptix Lands DoD Contract To Turn Smartphones Into Biometric Data-Gathering Tools. Smartphones may be invading pockets and purses across the world, but AOptix may soon bring those mobile devices to some far-flung war zones.

The Campbell, Calif.-based company announced earlier today that it (along with government-centric IT partner CACI) nabbed a $3 million research contract from the U.S. Department of Defense to bring its “Smart Mobile Identity” concept to fruition. The company kept coy about what that actually means in its release, but Wired has the full story: the big goal is to create an accessory of sorts capable of attaching to a commercially-available smartphone that can capture high-quality biometric data (think a subject’s thumb prints, face/eye scans, and voice recordings). At first glance, it really doesn’t sound like that tall an order; smartphones are substantially more powerful than they were just a few years ago, and that’s the sort of trend that isn’t going to be bucked anytime soon.

Patent Home (专利之家) - Design Inventions and Creative Business Opportunities » A safe and sturdy pseudo-airship aircraft. Micro Drones Now Buzzing Around Afghanistan. British soldiers are testing out tiny 4×1-inch mini surveillance drones throughout Afghanistan.

“We used it to look for insurgent firing points and check out exposed areas of the ground before crossing, which is a real asset,” said Sgt. Christopher Petherbridge. The toy-looking Black Hornet Nano can fly for up to 30 minutes with a top speed of 22 mph and a range of about one half-mile. U.K. Leap Motion Augmented Reality Demo. This Samsung HD DVR surveillance system records security video in high definition. By Nicolas Martin on 8/01/2013 [CES 2013] Whenever you think of security video, the first thing that comes to mind is probably grainy, black-and-white, low-resolution footage.

However, it seems that Samsung wants to change that. PanaCast, the camera that offers HD streaming with a 200-degree field of view. Altia's PanaCast is a prototype that could permanently change the HD video conferencing market.

Although our screens generally use the 16:9 or 4:3 formats, the human field of view is much wider than that (especially if you count peripheral vision), so the idea of video conferencing with an ultra-wide screen is not new. The system was designed to give the impression that the other party "is there", but it costs tens of thousands, or even hundreds of thousands, of dollars. PanaCast is a Kickstarter project that wants to change the game by several orders of magnitude. Sony: A sound-based "Kinect" - 27/12/2012. Microsoft Invents Smart Walls for Next-Gen Homes & Offices. Microsoft has been working with large scale multi-touch displays for some time now.

Their PixelSense projects, once under the branding of Surface, involve large scale interactive tables for the home and office. According to a new patent application that we recently discovered, it now appears that Microsoft has their eye on being the first to bring touch and haptics technology to future smart homes in the form of smart walls. This interactive brain will answer all your questions! We suspect you don't ask yourself this question every day, but have you ever wondered which part of your brain becomes active when you think about one thing or another?

This little online application is a real mine of information. Samsung Invents Air-Gesturing Controls for Tablets & Beyond. A recently published Samsung patent application has revealed a new air-gesturing invention that could supplement or eliminate the need to actually touch a display in order to control its functionality. When using Samsung's air-gesturing techniques in conjunction with a Samsung HDTV, for example, the idea is to simply eliminate the need for a physical remote controller. In the case of tablet computers, the user will have the option of turning on air-gesture functionality full time or for specific applications that the user assigns them to.

Samsung's air-gesturing capabilities are made possible by utilizing a specialized camera or multiple cameras that incorporate ultrasonic signals and/or specialized motion sensors. The signals and/or sensors are able to create a virtual screen area well above the surface of the tablet display as clearly illustrated in our cover graphic. Why Apple Might Have a Hard Time Keeping Up With Google Maps. FBI launches $1 billion face recognition project - tech - 07 September 2012. The Next Generation Identification programme will include a nationwide database of criminal faces and other biometrics. "FACE recognition is 'now'," declared Alessandro Acquisti of Carnegie Mellon University in Pittsburgh in a testimony before the US Senate in July.

It certainly seems that way. As part of an update to the national fingerprint database, the FBI has begun rolling out facial recognition to identify criminals. Keystroke typing, a promising biometric tool. Employment: Type on your keyboard and I'll tell you who you are. Throwable Panoramic Ball Camera // Jonas Pfeil. Face.com Brings Facial Recognition to the Masses, Now with Age Detection: Interview With CEO.

Face.com's API now returns an age estimation for faces it detects in photos - seen here with some recognizable examples. Looking at someone’s face can tell you a lot about who they are. New Surveillance System Identifies Your Face By Searching Through 36 Million Images Per Second. When it comes to surveillance, your face may now be your biggest liability. Privacy advocates, brace yourselves – the search capabilities of the latest surveillance technology are nightmare fuel. Hitachi Kokusai Electric recently demonstrated the development of a surveillance camera system capable of searching through 36 million images per second to match a person’s face taken from a mobile phone or captured by surveillance.

While the minimum resolution required for a match is 40 x 40 pixels, the facial recognition software allows a variance in the position of the person’s head, such that someone can be turned away from the camera horizontally or vertically by 30 degrees and it can still make a match. Furthermore, the software identifies faces in surveillance video as it is recorded, meaning that users can immediately watch the footage recorded before and after that point in time. The power of the search capabilities is in the algorithms that group similar faces together. Face.com: an API that detects age. Face.com, which develops software specializing in facial recognition, has just improved its API so that, in addition to recognizing a face, it estimates the person's approximate age.

It's over: you can no longer fool anyone about your age! This Futuristic Camera Can See Around Corners Using Lasers. Got Lazy Fingers? Now You Can Play Pong With Your Eyes. July 20th, 2012 by Hazel Chua. You Can Crash This RC Helicopter as Many Times as You Want.

Watch This: POV Aerial Shots Taken with $12k Copter. MIT robot plane deletes the pilot. When the robots come for you, at least they won't scratch the walls. MIT research into autonomous flight has delivered a robotic plane that can thread its way, at speed, through enclosed and indoor conditions, without requiring preconfigured flight plans or GPS navigation. The plane has significantly longer flight time than autonomous helicopters, though it introduced a fair few problems of its own. Unlike helicopters, which can hover, rotate on the spot, easily travel in three dimensions and go sideways, planes must keep moving and have reduced flexibility in where they can redirect themselves. MIT's solution was a custom-designed aircraft with shorter, chunkier wings that combine tight turning, the possibility of relatively low speeds without stalling, and reasonable cargo capabilities for the AI smarts and camera equipment.

Flying Robotic Swarm of Nano Quadrotors Gets Millions of Views, New Company. These acrobatic robots can launch themselves through rings, duck and weave around obstacles, and even fly through your bedroom window. Festo BionicOpter Robot Dragonfly Makes Quadcopters Look Clumsy. Seed drone Samarai swarms will dominate the skies [Video]. Quadcopters to the rescue of police officers. Micro-drones become more responsive thanks to MIT. LA100: a drone that can automatically take photos from above.

Behavior recognition.