Distill — Latest articles about machine learning. Dlib C++ Library - Machine Learning. Data Mining - Entropy (Information Gain) [Gerardnico] Entropy is built on an “information” function $I$ that satisfies $I(p_1 p_2) = I(p_1) + I(p_2)$, where $p_1 p_2$ is the probability of event 1 and event 2 both occurring, $p_1$ is the probability of event 1, and $p_2$ is the probability of event 2. Mathematics - Logarithm Function (log) H stands for entropy, E for ensemble.

![Data Mining - Entropy (Information Gain) [Gerardnico]](http://cdn.pearltrees.com/s/pic/th/entropy-information-gerardnico-172281421)
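The additivity property in the entropy entry above can be checked numerically. The sketch below assumes the usual choice of information function, $I(p) = -\log_2 p$ (measured in bits), which the excerpt points to through its logarithm link but does not spell out; the function names `information` and `entropy` are illustrative, not taken from the linked page.

```python
import math


def information(p: float) -> float:
    """Self-information, in bits, of an event with probability p (0 < p <= 1).

    Assumption: I(p) = -log2(p), the standard function satisfying
    I(p1 * p2) = I(p1) + I(p2) for independent events.
    """
    return -math.log2(p)


def entropy(probs) -> float:
    """Shannon entropy H, in bits: the expected information of a distribution."""
    return sum(p * information(p) for p in probs if p > 0)


# Additivity: the information of two independent events occurring together
# equals the sum of their individual informations.
p1, p2 = 0.5, 0.25
assert math.isclose(information(p1 * p2), information(p1) + information(p2))

# A fair coin carries one bit of entropy; four equally likely outcomes carry two.
assert math.isclose(entropy([0.5, 0.5]), 1.0)
assert math.isclose(entropy([0.25, 0.25, 0.25, 0.25]), 2.0)
```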

Sinapsis. Recurrent Neural Networks Tutorial, Part 1 – Introduction to RNNs. Recurrent Neural Networks (RNNs) are popular models that have shown great promise in many NLP tasks.

But despite their recent popularity, I’ve only found a limited number of resources that thoroughly explain how RNNs work and how to implement them. That’s what this tutorial is about. Neural networks and deep learning. Home. Using Artificial Intelligence to Augment Human Intelligence. Deep Learning. A Beginner's Guide to Recurrent Networks and LSTMs. The purpose of this post is to give students of neural networks an intuition about the functioning of recurrent networks and the purpose and structure of a prominent variation, LSTMs.

Recurrent nets are a type of artificial neural network designed to recognize patterns in sequences of data, such as text, genomes, handwriting, the spoken word, or numerical time series data emanating from sensors, stock markets and government agencies. They are arguably the most powerful type of neural network, applicable even to images, which can be decomposed into a series of patches and treated as a sequence. Since recurrent networks possess a certain type of memory, and memory is also part of the human condition, we’ll make repeated analogies to memory in the brain. Review of Feedforward Networks: To understand recurrent nets, first you have to understand the basics of feedforward nets.

Essentials of Deep Learning : Introduction to Long Short Term Memory. Applied Probability. AI memories — expert systems. December 3, 2015 This is part of a four post series spanning two blogs.

As I mentioned in my quick AI history overview, I was pretty involved with AI vendors in the 1980s. Here are some notes on what was going on then, specifically in what seemed to be the hottest area at the time — expert systems. Summing up: The expert systems business never grew to be very large, but it garnered undue attention (including from me). First, some basics. The core expert system metaphor was: Facts in. A question (often implicit) in. A recommended decision out. The essential technology of expert systems was what we’d now call a “rules engine”, but then was often called an “expert systems shell” or “inference engine”. The development process consisted of “knowledge engineers” talking to human experts and coming up with rules. Any product could handle 10s of rules. Anyhow: All combined, the expert system vendors didn’t accomplish much.

Pat Langley. Online machine learning. Online machine learning is used in the case where the data becomes available in a sequential fashion, in order to determine a mapping from the dataset to the corresponding labels.

The key difference between online learning and batch learning (or "offline" learning) techniques is that in online learning the mapping is updated after the arrival of every new datapoint in a scalable fashion, whereas batch techniques are used when one has access to the entire training dataset at once. Online learning could be used in the case of a process occurring in time, for example the value of a stock given its history and other external factors, in which case the mapping updates as time goes on and we get more and more samples. Ideally in online learning, the memory needed to store the function remains constant even with added datapoints, since the solution computed at one step is updated when a new datapoint becomes available, after which that datapoint can then be discarded. Dimensionality reduction. In machine learning and statistics, dimensionality reduction or dimension reduction is the process of reducing the number of random variables under consideration,[1] and can be divided into feature selection and feature extraction.[2] Feature extraction: The main linear technique for dimensionality reduction, principal component analysis, performs a linear mapping of the data to a lower-dimensional space in such a way that the variance of the data in the low-dimensional representation is maximized.
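The online learning update described above (one datapoint arrives, the mapping is adjusted, the datapoint is discarded) can be sketched as follows. This is only an illustration under stated assumptions: the excerpt does not name an algorithm, so the sketch uses a least-mean-squares (stochastic gradient) update on a linear model, and `OnlineLinearModel`, `stream`, the learning rate, and the synthetic target `y = 2x + 1` are all hypothetical choices.

```python
import random


class OnlineLinearModel:
    """Linear model updated one datapoint at a time.

    Memory use is constant: only the weights and bias are stored,
    never the datapoints themselves.
    """

    def __init__(self, n_features: int, learning_rate: float = 0.1):
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = learning_rate

    def predict(self, x):
        return sum(wi * xi for wi, xi in zip(self.w, x)) + self.b

    def update(self, x, y):
        """Least-mean-squares step on a single (x, y) pair."""
        error = self.predict(x) - y
        self.w = [wi - self.lr * error * xi for wi, xi in zip(self.w, x)]
        self.b -= self.lr * error
        return error


def stream(n: int, seed: int = 0):
    """Hypothetical data source yielding samples of y = 2x + 1 one at a time."""
    rng = random.Random(seed)
    for _ in range(n):
        x = [rng.uniform(-1.0, 1.0)]
        yield x, 2.0 * x[0] + 1.0


model = OnlineLinearModel(n_features=1)
for x, y in stream(5000):
    model.update(x, y)  # after this call the datapoint is no longer needed

print("learned weight:", model.w[0], "learned bias:", model.b)
```

A batch method would instead hold all 5000 samples in memory and fit them at once; here the state never grows beyond one weight and one bias.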

In practice, the correlation matrix of the data is constructed and the eigenvectors of this matrix are computed. History of the Perceptron. The evolution of the artificial neuron has progressed through several stages.
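The principal component analysis procedure mentioned above (build a matrix from the data, then compute its eigenvectors) can be sketched in a few lines. Two assumptions are worth flagging: the sketch uses the covariance matrix of mean-centered data rather than the correlation matrix, and it finds only the leading eigenvector by power iteration, a simple stand-in for a full eigendecomposition. The helper names and the toy data are hypothetical.

```python
import math


def covariance_matrix(rows):
    """Return (covariance matrix, mean-centered rows) for a list of samples."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - means[j] for j in range(d)] for r in rows]
    cov = [
        [sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
        for i in range(d)
    ]
    return cov, centered


def top_eigenvector(matrix, iterations=200):
    """Leading eigenvector via power iteration (assumed technique, not from the source)."""
    d = len(matrix)
    v = [1.0] * d
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return v


# Toy 2-D data lying close to the line y = 2x.
data = [[1.0, 2.1], [2.0, 3.9], [3.0, 6.2], [4.0, 7.8], [5.0, 10.1]]
cov, centered = covariance_matrix(data)
pc1 = top_eigenvector(cov)

# Linear mapping to a 1-D space: project each centered sample onto pc1.
projected = [sum(c * p for c, p in zip(row, pc1)) for row in centered]
print("first principal direction:", pc1)
print("1-D representation:", projected)
```

The first principal direction points roughly along (1, 2), the direction in which the toy data varies most, so the one-dimensional projection retains nearly all of the variance.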

The roots of the artificial neuron are firmly grounded in neurological work done primarily by Santiago Ramon y Cajal and Sir Charles Scott Sherrington. Ramon y Cajal was a prominent figure in the exploration of the structure of nervous tissue and showed that, despite their ability to communicate with each other, neurons were physically separated from other neurons. With a greater understanding of the basic elements of the brain, efforts were made to describe how these basic neurons could result in overt behaviors, to which William James was a prominent theoretical contributor. Working from the beginnings of neuroscience, Warren McCulloch and Walter Pitts, in their 1943 paper "A Logical Calculus of the Ideas Immanent in Nervous Activity," contended that neurons with a binary threshold activation function were analogous to first order logic sentences.
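The correspondence between binary threshold neurons and logical sentences can be illustrated with a small sketch. The weights and thresholds below are one common textbook choice and are assumptions here, not values taken from the 1943 paper; `threshold_neuron` and the gate helpers are hypothetical names.

```python
def threshold_neuron(inputs, weights, threshold):
    """Binary threshold unit: fires (1) when the weighted input sum reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def logical_and(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return threshold_neuron([a, b], weights=[1, 1], threshold=2)


def logical_or(a, b):
    # A single active input is enough to reach a threshold of 1.
    return threshold_neuron([a, b], weights=[1, 1], threshold=1)


def logical_not(a):
    # An inhibitory (negative) weight keeps the unit silent when the input is active.
    return threshold_neuron([a], weights=[-1], threshold=0)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND:", logical_and(a, b), "OR:", logical_or(a, b))
```

Each gate is a single neuron, so more complex logical expressions can be built by feeding the outputs of some units into the inputs of others.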

The activation function then becomes: Recurrent neural network. A recurrent neural network (RNN) is a class of neural network where connections between units form a directed cycle.

This creates an internal state of the network which allows it to exhibit dynamic temporal behavior. Unlike feedforward neural networks, RNNs can use their internal memory to process arbitrary sequences of inputs. This makes them applicable to tasks such as unsegmented connected handwriting recognition, where they have achieved the best known results.[1] Artificial neural network. An artificial neural network is an interconnected group of nodes, akin to the vast network of neurons in a brain.
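The internal state that lets a recurrent network process sequences can be sketched with a single update rule. This is a minimal illustration assuming a simple (Elman-style) formulation, $h_t = \tanh(W_{xh} x_t + W_{hh} h_{t-1} + b)$, which the excerpt does not specify; the weight values and function names are hypothetical.

```python
import math


def rnn_step(x, h, w_xh, w_hh, b):
    """One recurrent update: the new state depends on the input AND the previous state."""
    return [
        math.tanh(
            sum(w * xi for w, xi in zip(w_xh[i], x))
            + sum(w * hj for w, hj in zip(w_hh[i], h))
            + b[i]
        )
        for i in range(len(h))
    ]


def run_rnn(sequence, w_xh, w_hh, b):
    """Feed a sequence of any length through the network, carrying the state forward."""
    h = [0.0] * len(b)
    for x in sequence:
        h = rnn_step(x, h, w_xh, w_hh, b)
    return h


# Hypothetical weights: 1 input unit, 2 hidden units.
w_xh = [[0.5], [-0.3]]
w_hh = [[0.1, 0.4], [-0.2, 0.3]]
b = [0.0, 0.0]

state_a = run_rnn([[1.0], [0.0]], w_xh, w_hh, b)
state_b = run_rnn([[0.0], [1.0]], w_xh, w_hh, b)
print(state_a)
print(state_b)
```

The two sequences contain the same values in a different order, yet they leave the network in different final states, which is the sense in which the internal state acts as memory. A feedforward network applied to each input in isolation could not make that distinction.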

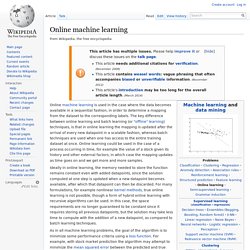

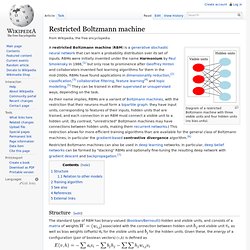

Here, each circular node represents an artificial neuron and an arrow represents a connection from the output of one neuron to the input of another. For example, a neural network for handwriting recognition is defined by a set of input neurons which may be activated by the pixels of an input image. After being weighted and transformed by a function (determined by the network's designer), the activations of these neurons are then passed on to other neurons. This process is repeated until finally, an output neuron is activated. This determines which character was read. Restricted Boltzmann machine. Diagram of a restricted Boltzmann machine with three visible units and four hidden units (no bias units).

A restricted Boltzmann machine (RBM) is a generative stochastic neural network that can learn a probability distribution over its set of inputs. RBMs were initially invented under the name Harmonium by Paul Smolensky in 1986,[1] but only rose to prominence after Geoffrey Hinton and collaborators invented fast learning algorithms for them in the mid-2000s. RBMs have found applications in dimensionality reduction,[2] classification,[3] collaborative filtering, feature learning[4] and topic modelling.[5] They can be trained in either supervised or unsupervised ways, depending on the task.

Restricted Boltzmann machines can also be used in deep learning networks. In particular, deep belief networks can be formed by "stacking" RBMs and optionally fine-tuning the resulting deep network with gradient descent and backpropagation.[7] Multilayer perceptron. Self-organizing map. A self-organizing map (SOM) or self-organizing feature map (SOFM) is a type of artificial neural network (ANN) that is trained using unsupervised learning to produce a low-dimensional (typically two-dimensional), discretized representation of the input space of the training samples, called a map.

Self-organizing maps are different from other artificial neural networks in the sense that they use a neighborhood function to preserve the topological properties of the input space. This makes SOMs useful for visualizing low-dimensional views of high-dimensional data, akin to multidimensional scaling. The model was first described as an artificial neural network by the Finnish professor Teuvo Kohonen, and is sometimes called a Kohonen map or network.[1][2] Like most artificial neural networks, SOMs operate in two modes: training and mapping.

A self-organizing map consists of components called nodes or neurons. Large SOMs display emergent properties. Protein Secondary Structure Prediction with Neural Nets: Feed-Forward Networks. Introduction to feed-forward nets Feed-forward nets are the most well-known and widely-used class of neural network. The popularity of feed-forward networks derives from the fact that they have been applied successfully to a wide range of information processing tasks in such diverse fields as speech recognition, financial prediction, image compression, medical diagnosis and protein structure prediction; new applications are being discovered all the time. (For a useful survey of practical applications for feed-forward networks, see [Lisboa, 1992].)

In common with all neural networks, feed-forward networks are trained, rather than programmed, to carry out the chosen information processing tasks. Autoencoder. An autoencoder, autoassociator or Diabolo network[1]:19 is an artificial neural network used for learning efficient codings.[2] The aim of an auto-encoder is to learn a compressed, distributed representation (encoding) for a set of data, typically for the purpose of dimensionality reduction.

Architecturally, the simplest form of the autoencoder is a feedforward, non-recurrent neural net that is very similar to the multilayer perceptron (MLP), with an input layer, an output layer and one or more hidden layers connecting them. The difference from the MLP is that in an autoencoder, the output layer has as many nodes as the input layer, and instead of being trained to predict some target value y given inputs x, an autoencoder is trained to reconstruct its own inputs x. That is, the training algorithm can be summarized as: for each input x, do a feed-forward pass to compute activations at all hidden layers, then at the output layer to obtain an output x̂. Feedforward neural network. Perceptron. A perceptron (or multilayer perceptron) is a neural network in which the neurons are connected to each other in different layers.
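Returning to the autoencoder overview above, the feed-forward pass and the comparison of the output x̂ with the input x can be sketched as follows. This covers only the forward pass and a reconstruction error; the weight update that training would perform is omitted. The sigmoid activation, the squared-error measure, the layer sizes, and all weight values are assumptions chosen for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense(x, weights, biases):
    """Fully connected layer: one weight row and one bias per output unit."""
    return [
        sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, biases)
    ]


def autoencoder_forward(x, enc_w, enc_b, dec_w, dec_b):
    """Encode x into a smaller hidden code, then decode back to the input size."""
    code = dense(x, enc_w, enc_b)        # hidden layer (compressed representation)
    x_hat = dense(code, dec_w, dec_b)    # output layer, same size as the input
    return code, x_hat


def reconstruction_error(x, x_hat):
    """Squared error between the input and its reconstruction (assumed loss)."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat))


# Hypothetical, untrained weights for a 4 -> 2 -> 4 network.
enc_w = [[0.5, -0.5, 0.5, -0.5], [0.25, 0.25, -0.25, -0.25]]
enc_b = [0.0, 0.0]
dec_w = [[1.0, 0.5], [-1.0, 0.5], [1.0, -0.5], [-1.0, -0.5]]
dec_b = [0.0, 0.0, 0.0, 0.0]

x = [1.0, 0.0, 1.0, 0.0]
code, x_hat = autoencoder_forward(x, enc_w, enc_b, dec_w, dec_b)
print("code:", code)
print("reconstruction:", x_hat)
print("error:", reconstruction_error(x, x_hat))
```

The target of the comparison is the input itself rather than a separate label y, which is the point of difference from an ordinary MLP.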

A first layer consists of input neurons, where the input signals are applied. Next come one or more 'hidden' layers, which provide more 'intelligence', and finally there is the output layer, which presents the result of the perceptron. All neurons of a given layer are connected to all neurons of the next layer, so that the input signal propagates forward through the successive layers. Feature learning. Deep learning. Connectionism. Connectionism is a set of approaches in the fields of artificial intelligence, cognitive psychology, cognitive science, neuroscience, and philosophy of mind, that models mental or behavioral phenomena as the emergent processes of interconnected networks of simple units.

There are many forms of connectionism, but the most common forms use neural network models. Machine Learning Project at the University of Waikato in New Zealand. Support vector machine. In machine learning, support vector machines (SVMs, also support vector networks[1]) are supervised learning models with associated learning algorithms that analyze data and recognize patterns, used for classification and regression analysis. Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples into one category or the other, making it a non-probabilistic binary linear classifier.

Kernel Methods for Pattern Analysis - The Book. Mika Rautiainen. Jayan Eledath.