Probabilistic Models of Cognition. Basic Neural Network Tutorial – Theory. Well, this tutorial has been a long time coming.

Neural Networks (NNs) are something that I'm interested in, and also a technique that gets mentioned a lot in movies and by pseudo-geeks when referring to AI in general. They are made out to be really intense and complicated systems when in fact they are nothing more than a simple input-output machine (at least for the standard Feed Forward Neural Networks (FFNN)). As with any field, the more you delve into it the more technical it gets, and NNs are no exception: the more research you do, the more complicated the architectures, training techniques, and activation functions become.

For now this is just a simple primer on NNs.

Introduction to Neural Networks

There are many different types of neural networks and techniques for training them, but I'm just going to focus on the most basic one of them all: the classic back propagation neural network (BPN). This BPN uses the gradient descent learning method.

The Neuron

Simple, huh?

Backpropagation. The project describes the teaching process of a multi-layer neural network employing the backpropagation algorithm.

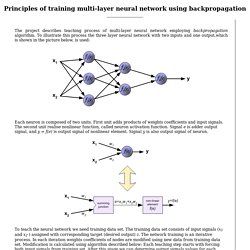

To illustrate this process, a three-layer neural network with two inputs and one output, shown in the picture, is used. Each neuron is composed of two units. The first unit adds the products of the weight coefficients and the input signals. The second unit realises a nonlinear function, called the neuron activation function. Signal e is the adder output signal, and y = f(e) is the output signal of the nonlinear element.
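The two units described above can be sketched in a few lines of Python. This is only an illustration, not the code from the tutorial: the function names `sigmoid` and `neuron_output`, the choice of the logistic sigmoid as the activation function f, and the sample weights and inputs are all assumptions, since the excerpt does not say which nonlinear function is used.

```python
import math


def sigmoid(e):
    """Logistic sigmoid, assumed here as the activation function f."""
    return 1.0 / (1.0 + math.exp(-e))


def neuron_output(weights, inputs, activation=sigmoid):
    """Return (e, y) for one neuron (hypothetical helper).

    e is the adder output: the sum of weight * input products.
    y = f(e) is the output of the nonlinear element.
    """
    # First unit: add the products of weight coefficients and input signals.
    e = sum(w * x for w, x in zip(weights, inputs))
    # Second unit: apply the nonlinear activation function.
    y = activation(e)
    return e, y


# Illustrative values only: two inputs, matching the two-input network above.
e, y = neuron_output([0.5, -0.25], [1.0, 2.0])
print(e, y)
```

With these sample values the two products cancel, so e is 0 and the sigmoid returns 0.5. Any other differentiable nonlinear function could be passed as `activation` instead.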

To teach the neural network we need a training data set. Propagation of signals through the hidden layer. Propagation of signals through the output layer.

Introduction to Neural Networks. Instructors: Nici Schraudolph and Fred Cummins, Istituto Dalle Molle di Studi sull'Intelligenza Artificiale, Lugano, CH.

Course content

Summary

Our goal is to introduce students to a powerful class of model, the Neural Network.

In fact, this is a broad term which includes many diverse models and approaches. We then introduce one kind of network in detail: the feedforward network trained by backpropagation of error.

Lecture 1: Introduction
Lecture 2: The Backprop Toolbox
Lecture 3: Advanced Topics

Your Courses. Data Frame Row Slice.

We retrieve rows from a data frame with the single square bracket operator, just like what we did with columns.

However, in addition to an index vector of row positions, we append an extra comma character. This is important, as the extra comma signals a wildcard match for the second coordinate for column positions.

Numeric Indexing

For example, the following retrieves a row record of the built-in data set mtcars. Please notice the extra comma in the square bracket operator; it is not a typo.

Online Learning - RStudio. The R Project for Statistical Computing. Tutorials - emergent. Computational Cognitive Neuroscience Wiki. Find Courses by Topic. Web.stanford.edu/group/pdplab/pdphandbook/ Teaching Cognitive Modeling Using PDP++ - Brains, Minds & Media.

2.2 Supported Platforms, Access, & Installation

Originally, PDP++ was developed for use on UNIX [3] machines.

Since its initial release, PDP++ has been ported to a number of other operating systems, though it still retains traces of its roots. In particular, support on a variety of Microsoft Windows [4] platforms (including 95, 98, 2000, NT, and XP) is provided through the use of the Cygwin [5] package. Cygwin provides some aspects of UNIX-like functionality within a Windows environment, allowing PDP++ to run on Windows machines without requiring an excessive amount of platform-specific code. Similarly, the Darwin project for Mac OS X [6] provides sufficient UNIX-like functionality to allow PDP++ to run under Mac OS X versions 10.3 or 10.4 (Tiger). It is worth noting that PDP++ provides support for symmetric multiprocessing (SMP), allowing the software to use parallel processors when such hardware is available.

The PDP++ software package may be freely downloaded from the internet.

Emergent. Comparison of Neural Network Simulators - emergent. Emergent. PDP Software. DAGitty - drawing and analyzing causal diagrams (DAGs). Tutorial.

Tutorial Contents

Introduction
Specifying a model in the BUGS language
Running a model in WinBUGS
Monitoring parameter values
Checking convergence
How many iterations after convergence?
Obtaining summaries of the posterior distribution

Introduction

This tutorial is designed to provide new users with a step-by-step guide to running an analysis in WinBUGS. It is not intended to be prescriptive, but rather to introduce you to the main tools needed to run an MCMC simulation in WinBUGS, and give some guidance on appropriate usage of the software.

The Seeds example from Volume I of the WinBUGS examples will be used throughout this tutorial. This example is taken from Table 3 of Crowder (1978) and concerns the proportion of seeds that germinated on each of 21 plates arranged according to a 2 by 2 factorial layout by seed and type of root extract. The data are shown below, where r_i and n_i are the number of germinated and the total number of seeds on the i-th plate, i = 1, ..., N.