Dev:treetaggerwrapper [LPointal] See TreeTagger Python Wrapper on SourceSup, where you can download the latest version of treetaggerwrapper.py from the Subversion repository. Don't forget to download and install TreeTagger itself, as the module presented here is really just a wrapper to call Helmut Schmid's nice tool from within Python.

Once TreeTagger is installed, you can set up a TAGDIR environment variable to indicate the installation directory. The wrapper uses it to locate the TreeTagger binary and its libraries; otherwise you must give this location in all of your scripts when you create the wrapper object (which makes your scripts dependent on the user's TreeTagger location). There is documentation at the beginning of the module; reading it is a good place to start before using the module. Treetagger-python/treetagger.py at master · miotto/treetagger-python. Advanced Python libs.
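The TAGDIR lookup described for treetaggerwrapper above can be illustrated with a small sketch. This is not the wrapper's own code: the helper name `resolve_tagdir` and the example paths are hypothetical, and the only assumption taken from the text is that an explicitly given directory is used if present and the TAGDIR environment variable otherwise.

```python
import os


def resolve_tagdir(explicit_dir=None, environ=os.environ):
    """Pick the TreeTagger directory (hypothetical helper, for illustration).

    An explicitly passed directory wins; otherwise fall back to the
    TAGDIR environment variable. Fail loudly if neither is available.
    """
    tagdir = explicit_dir or environ.get("TAGDIR")
    if not tagdir:
        raise RuntimeError(
            "TreeTagger location unknown: set TAGDIR or pass a directory"
        )
    return tagdir


if __name__ == "__main__":
    # Hypothetical install path, used only when TAGDIR is not set.
    print(resolve_tagdir(explicit_dir=None if "TAGDIR" in os.environ else "/opt/treetagger"))
```

Relying on the environment variable keeps the install path out of individual scripts, which is the portability point made above.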

Python Regex Tool. Club des développeurs Python : actualités, cours, tutoriels, faq, sources, forum. Pattern. Pattern is a web mining module for the Python programming language.

It has tools for data mining (Google, Twitter and Wikipedia API, a web crawler, an HTML DOM parser), natural language processing (part-of-speech taggers, n-gram search, sentiment analysis, WordNet), machine learning (vector space model, clustering, SVM), network analysis and <canvas> visualization. The module is free, well-documented and bundled with 50+ examples and 350+ unit tests. Download Installation Pattern is written for Python 2.5+ (no support for Python 3 yet). To install Pattern so that the module is available in all Python scripts, from the command line do: > cd pattern-2.6 > python setup.py install If you have pip, you can automatically download and install from the PyPI repository: If none of the above works, you can make Python aware of the module in three ways: Quick overview.

Python Script - Plugin for Notepad++ Mechanize. Stateful programmatic web browsing in Python, after Andy Lester’s Perl module WWW::Mechanize.

The examples below are written for a website that does not exist (example.com), so they cannot be run. There are also some working examples that you can run. import re; import mechanize. PycURL Home Page. The igraph library for complex network research. June 24, 2015 Release Notes This is a new major release, with a lot of UI changes.

We tried to make it easier to use, with short and easy to remember, consistent function names. Unfortunately this also means that many functions have new names now, but don't worry, all the old names still work. Apart from the new names, the biggest change in this release is that most functions that used to return numeric vertex or edge ids, return vertex/edge sequences now. We will update the documentation on this site, once the package is on CRAN and available for all architectures. More → January 16, 2015 A couple of days ago we changed how we use GitHub for igraph development. Main igraph repositories now: April 21, 2014 Some bug fixes, to make sure that the code included in 'Statistical Analysis of Network Data with R' works.

February 4, 2014 igraph @ github. Matplotlib: python plotting — Matplotlib 1.2.0 documentation. Lxml - Processing XML and HTML with Python. Category:LanguageBindings -> PySide. Welcome to the PySide documentation wiki page.

The PySide project provides LGPL-licensed Python bindings for Qt. It also includes a complete toolchain for rapidly generating bindings for any Qt-based C++ class hierarchies. PySide Qt bindings allow both free open source and proprietary software development and ultimately aim to support Qt platforms. The latest version of PySide is 1.2.1, released on August 16, 2013, and provides access to the complete Qt 4.8 framework.

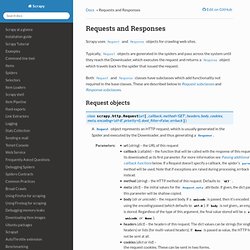

This Wiki is a community area where you can easily contribute, and which may contain rapidly changing information. Also, since PySide is sharing the wiki with other Qt users, include the word “PySide” or “Python” in the names of any new pages you create to differentiate them from other Qt pages. FMiner - visual web scraping ($$) An open source web scraping framework for Python. Requests and Responses — Scrapy 0.14.4 documentation. Using FormRequest to send data via HTTP POST¶ If you want to simulate an HTML form POST in your spider and send a couple of key-value fields, you can return a FormRequest object (from your spider) like this:
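The Scrapy snippet itself is not reproduced in this excerpt. As a stand-in, here is a minimal sketch of what a form POST with a couple of key-value fields amounts to, written with Python's standard library rather than Scrapy, so it is not Scrapy's API. The helper name `build_form_post`, the URL and the field values are assumptions made for illustration; in a spider, Scrapy's `FormRequest` takes the target URL and the form fields and builds the equivalent request for you.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_form_post(url, fields):
    """Build (but do not send) an HTTP POST carrying URL-encoded form fields."""
    # Form fields are encoded as key=value pairs joined with '&'.
    body = urlencode(fields).encode("ascii")
    return Request(
        url,
        data=body,
        headers={"Content-Type": "application/x-www-form-urlencoded"},
        method="POST",
    )


# Hypothetical endpoint and fields; example.com does not accept this form.
request = build_form_post(
    "http://www.example.com/post/action",
    {"name": "John Doe", "age": "27"},
)

if __name__ == "__main__":
    print(request.get_method(), request.full_url)
    print(request.data)
```

The request is only constructed here, never sent, so the sketch can be run safely without network access.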

Python - Scraping forums with scrapy. Weblogs Forum - Screen Scraping With Python. Summary Web-enabling an old terminal-oriented application turns into more fun than expected.

A blow-by-blow account of writing a screen scraper with Python and pexpect. I recently finished a project for a local freight broker. They run their business on an old SCO Unix-based "green screen" terminal application. They wanted to enable some functionality on their web site, so customers could track their shipments, and carriers could update their location and status. By the time the project got to me the client had almost given up on finding a solution. How Did You Learn To Web Scrape Using Python?