Plot dynamic network time series response - MATLAB plotresponse.

Feed forward (control). Feed-forward is a term describing an element or pathway within a control system that passes a controlling signal from a source in its external environment, often a command signal from an external operator, to a load elsewhere in its external environment.

A control system that has only feed-forward behavior responds to its control signal in a pre-defined way, without responding to how the load reacts. This contrasts with a system that also has feedback, which adjusts the output to take account of how it affects the load and of how the load itself may vary unpredictably; the load is considered to belong to the external environment of the system. In a feed-forward system, the control variable adjustment is not error-based. Instead, it is based on knowledge about the process, in the form of a mathematical model of the process, and on knowledge about or measurements of the process disturbances.[1] Such systems appear in control theory, physiology, and computing.
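As a rough illustration of the paragraph above, the Python sketch below computes a control signal purely from a process model and a measured disturbance, with no error term. The linear process `output = gain * u + disturbance`, the function names, and all numbers are assumptions made for this example; none of them come from the source.

```python
def feedforward_command(setpoint, measured_disturbance, model_gain):
    """Invert the assumed process model to get the actuator command.

    The controller never looks at the actual output, so it cannot
    react to an error; it relies entirely on the model being right.
    """
    return (setpoint - measured_disturbance) / model_gain


def plant(u, disturbance, true_gain):
    """Hypothetical process: output = true_gain * u + disturbance."""
    return true_gain * u + disturbance


setpoint = 20.0
disturbance = -5.0  # assumed to be measurable before it affects the output

u = feedforward_command(setpoint, disturbance, model_gain=2.0)

# Case 1: the model matches the real process.
y_exact = plant(u, disturbance, true_gain=2.0)

# Case 2: the real gain differs from the model; nothing corrects the result.
y_mismatch = plant(u, disturbance, true_gain=1.5)

print(u, y_exact, y_mismatch)
```

With an exact model the output lands on the setpoint. With a wrong gain the output is off and stays off, which is the gap that feedback is meant to close.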

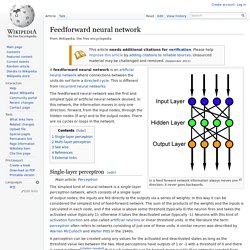

Open-loop controller.

Feedforward neural network. In a feedforward network, information always moves in one direction; it never goes backwards.
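The one-directional flow can be sketched in a few lines of Python. This is a minimal illustration under assumed values: the layer sizes, weights, and the choice of `tanh` for the hidden layer are all made up for the example.

```python
import math


def dense(inputs, weights, biases, activation):
    """One layer: each output unit sees only the previous layer's outputs."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(x, layers):
    """Pass x through the layers in order; nothing is ever fed back."""
    for weights, biases, activation in layers:
        x = dense(x, weights, biases, activation)
    return x


# Hypothetical 2-3-1 network with hand-picked weights.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1], math.tanh),
    ([[1.0, -1.0, 0.5]], [0.2], lambda z: z),  # linear output unit
]

y = forward([1.0, 2.0], layers)
print(y)
```

Because `forward` holds no state between calls, the same input always gives the same output, regardless of what was presented before.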

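Recursive neural network. In the Chinese-language usage quoted here, 递归神经网络 (RNN) is an umbrella term for two kinds of artificial neural network.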

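One is the recurrent neural network (recursive in time); the other is the recursive neural network (recursive in structure). The connections between the neurons of a recurrent neural network form a directed graph (with cycles), whereas a recursive neural network applies a similar network structure recursively to build a more complex deep network. A recurrent neural network can describe dynamic temporal behavior because, unlike a feedforward neural network, which accepts input of a more specific structure, an RNN passes its state around a loop within its own network and can therefore accept a broader range of time-series inputs. Handwriting recognition was the earliest research result to use RNNs successfully.

Feedforward neural network. Among artificial neural networks, the feedforward neural network was the first type to be invented and is the simplest.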

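Inside it, information propagates in one direction, from the input layer to the output layer.

Convolutional neural network. A convolutional neural network (CNN) is a feedforward neural network whose artificial neurons respond to the surrounding units within a limited part of the coverage area,[1] and it performs very well on large-scale image processing.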

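The weather-forecasting app Caiyun Weather (彩云天气) uses a convolutional neural network algorithm and can precisely forecast rainfall for a single city street ten minutes to an hour ahead. A convolutional neural network consists of one or more convolutional layers topped by fully connected layers (corresponding to a classical neural network), together with associated weights and pooling layers. This structure lets a convolutional neural network exploit the two-dimensional structure of its input data. Compared with other deep learning architectures, convolutional neural networks give better results in image and speech recognition. The model can also be trained with the backpropagation algorithm.

Convolutional neural network - Google Search.

[Code-oriented] Learning Deep Learning (3): Convolution Neural Network (CNN) - DarkScope从这里开始. Lately I have been reading about Deep Learning and have gone through plenty of blogs and papers. To be honest, though, this has left me neglecting implementation: for one thing my computer is not very good, and for another I am not yet able to write a toolbox myself. I only followed Andrew Ng's UFLDL tutorial and wrote some code within existing frameworks (that code is on github). Later I found a MATLAB Deep Learning toolbox whose code is quite simple and seems well suited to learning the algorithms. A MATLAB implementation can also leave out a lot of data-structure code, which makes the algorithmic ideas very clear. So I want to consolidate what I have learned by reading through this toolbox's code, and to lay the groundwork for the next step of practice.

(This article only interprets the algorithm from the code's point of view; for the actual theory you still need to read the papers. I will give the names of some relevant papers along the way. The aim is to walk through the algorithm's procedure, not to dig into its principles and formulas.)

Code used: DeepLearnToolbox. Thanks to the toolbox's author.

Today's topic is CNN. CNNs are a bit tangled to explain, so you may want to read up on convolution and pooling (subsampling) beforehand, as well as tornadomeet's blog post. Here is that classic figure:

Open \tests\test_example_CNN.m and take a look:

    cnn.layers = {
        struct('type', 'i') %input layer
        struct('type', 'c', 'outputmaps', 6, 'kernelsize', 5) %convolution layer
        struct('type', 's', 'scale', 2) %subsampling layer
        struct('type', 'c', 'outputmaps', 12, 'kernelsize', 5) %convolution layer
        struct('type', 's', 'scale', 2) %subsampling layer
    };
    cnn = cnnsetup(cnn, train_x, train_y);
    opts.alpha = 1;
    opts.batchsize = 50;
    opts.numepochs = 1;
    cnn = cnntrain(cnn, train_x, train_y, opts);

What follows is mainly an explanation of what some of these parameters do; for the details, see the comments in the code.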
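To see what the layer list above implies, the Python sketch below traces the feature-map count and size through the five layers. It is an illustration written for this note, not part of DeepLearnToolbox. It assumes a 28x28 input (MNIST-sized; the post does not state the input size), convolutions without padding (so a map shrinks by `kernelsize - 1`), and non-overlapping subsampling. The function name `trace_feature_maps` is hypothetical.

```python
def trace_feature_maps(input_size, layers):
    """Return (type, number of maps, map side length) after each layer.

    Assumes square maps, unpadded convolution, and subsampling
    windows that do not overlap.
    """
    size, maps = input_size, 1
    trace = []
    for layer in layers:
        kind = layer["type"]
        if kind == "c":  # convolution layer
            size = size - layer["kernelsize"] + 1
            maps = layer["outputmaps"]
        elif kind == "s":  # subsampling (pooling) layer
            if size % layer["scale"] != 0:
                raise ValueError("map size must be divisible by the scale")
            size //= layer["scale"]
        elif kind != "i":  # 'i' is the input layer and changes nothing
            raise ValueError("unknown layer type: " + kind)
        trace.append((kind, maps, size))
    return trace


# Same layer list as the MATLAB example, written as Python dicts.
layers = [
    {"type": "i"},
    {"type": "c", "outputmaps": 6, "kernelsize": 5},
    {"type": "s", "scale": 2},
    {"type": "c", "outputmaps": 12, "kernelsize": 5},
    {"type": "s", "scale": 2},
]

trace = trace_feature_maps(28, layers)  # 28 is an assumed input size
for kind, maps, size in trace:
    print(kind, maps, "maps of", size, "x", size)

_, last_maps, last_size = trace[-1]
flattened = last_maps * last_size * last_size
print("features passed to the output layer:", flattened)
```

Under these assumptions the map side length goes 28, 24, 12, 8, 4, and the final 12 maps of 4x4 flatten to 192 features.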

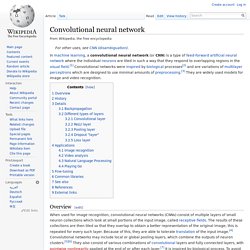

Convolutional neural network. In machine learning, a convolutional neural network (or CNN) is a type of feed-forward artificial neural network where the individual neurons are tiled in such a way that they respond to overlapping regions in the visual field.[1] Convolutional networks were inspired by biological processes[2] and are variations of multilayer perceptrons which are designed to use minimal amounts of preprocessing.[3] They are widely used models for image and video recognition.
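The idea of neurons tiled over overlapping regions can be sketched as a small window sliding over an image one pixel at a time. The Python below is a minimal illustration under assumed values: the 3x4 image, the 2x2 kernel, and the function name `conv2d_valid` are all invented for the example.

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image with stride 1 and no padding.

    Each output value plays the role of one neuron looking at a small
    patch; neighbouring neurons look at overlapping patches.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                kernel[i][j] * image[r + i][c + j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Tiny image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hypothetical kernel that responds to a dark-to-bright vertical edge.
kernel = [
    [-1, 1],
    [-1, 1],
]

response = conv2d_valid(image, kernel)
print(response)  # [[0, 2, 0], [0, 2, 0]]
```

Only the patches that straddle the edge respond, and the same four kernel values are reused at every position.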

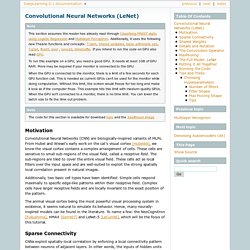

Overview. Some time delay neural networks also use an architecture very similar to that of convolutional neural networks, especially those for image recognition and/or classification tasks, since the "tiling" of the neuron outputs can easily be carried out in timed stages in a manner useful for analysis of images.[8] Compared to other image classification algorithms, convolutional neural networks use relatively little pre-processing.

Convolutional Neural Networks (LeNet) - DeepLearning 0.1 documentation. Note: This section assumes the reader has already read through Classifying MNIST digits using Logistic Regression and Multilayer Perceptron.

Additionally, it uses the following new Theano functions and concepts: T.tanh, shared variables, basic arithmetic ops, T.grad, floatX, pool, conv2d, dimshuffle. If you intend to run the code on GPU, also read GPU. To run this example on a GPU, you need a good GPU. It needs at least 1GB of GPU RAM. When the GPU is connected to the monitor, there is a limit of a few seconds for each GPU function call.

Motivation. Convolutional Neural Networks (CNN) are biologically-inspired variants of MLPs. Additionally, two basic cell types have been identified: Simple cells respond maximally to specific edge-like patterns within their receptive field. The animal visual cortex being the most powerful visual processing system in existence, it seems natural to emulate its behavior.

Sparse Connectivity. Imagine that layer m-1 is the input retina.

Shared Weights.

Convolutional Neural Networks.