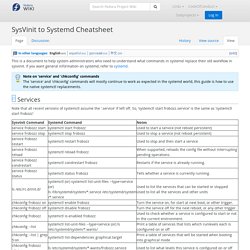

SysVinit to Systemd Cheatsheet. This is a document to help system administrators who need to understand what commands in systemd replace their old workflow in sysvinit.
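As a rough illustration of the kind of mapping the cheatsheet covers, the sketch below translates a few simple `service` and `chkconfig` invocations into their `systemctl` equivalents. The helper name `to_systemctl` and the small lookup tables are hypothetical and only cover a subset of commands; the cheatsheet itself is the reference for the full list.

```python
import shlex

# Subset of SysVinit -> systemd equivalents; deliberately not exhaustive.
SERVICE_ACTIONS = {"start", "stop", "restart", "reload", "status"}
CHKCONFIG_STATES = {"on": "enable", "off": "disable"}


def to_systemctl(command: str) -> str:
    """Translate a simple `service` or `chkconfig` call (hypothetical helper)."""
    parts = shlex.split(command)
    if len(parts) == 3 and parts[0] == "service" and parts[2] in SERVICE_ACTIONS:
        # service NAME ACTION  ->  systemctl ACTION NAME
        return f"systemctl {parts[2]} {parts[1]}"
    if len(parts) == 3 and parts[0] == "chkconfig" and parts[2] in CHKCONFIG_STATES:
        # chkconfig NAME on|off  ->  systemctl enable|disable NAME
        return f"systemctl {CHKCONFIG_STATES[parts[2]]} {parts[1]}"
    raise ValueError(f"no known systemctl equivalent for: {command!r}")


print(to_systemctl("service httpd restart"))  # systemctl restart httpd
print(to_systemctl("chkconfig httpd on"))     # systemctl enable httpd
```

Note the argument order flips: SysVinit tools take the service name first, while `systemctl` takes the verb first.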

If you want general information on systemd, refer to systemd. Note on 'service' and 'chkconfig' commands The 'service' and 'chkconfig' commands will mostly continue to work as expected in the systemd world, this guide is how to use the native systemctl replacements. 🔗 Services Note that all recent versions of systemctl assume the '.service' if left off. Concepts. Edit This Page The Concepts section helps you learn about the parts of the Kubernetes system and the abstractions Kubernetes uses to represent your clusterA set of worker machines, called nodes, that run containerized applications.

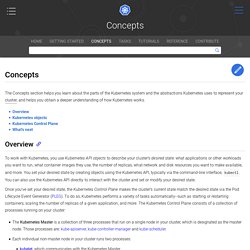

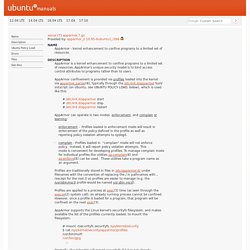

Every cluster has at least one worker node., and helps you obtain a deeper understanding of how Kubernetes works. Overview. Envoy Proxy - Home. AppArmor - kernel enhancement to confine programs to a limited set of. Ubuntu manuals xenial (7) apparmor.7.gz Provided by: apparmor_2.10.95-0ubuntu1_i386 AppArmor - kernel enhancement to confine programs to a limited set of resources.

AppArmor is a kernel enhancement to confine programs to a limited set of resources. AppArmor's unique security model is to bind access control attributes to programs rather than to users. AppArmor confinement is provided via profiles loaded into the kernel via apparmor_parser(8), typically through the /etc/init.d/apparmor SysV initscript (on Ubuntu, see UBUNTU POLICY LOAD, below), which is used like this:

    # /etc/init.d/apparmor start
    # /etc/init.d/apparmor stop
    # /etc/init.d/apparmor restart

AppArmor can operate in two modes: enforcement, and complain or learning:

· enforcement - Profiles loaded in enforcement mode will result in enforcement of the policy defined in the profile as well as reporting policy violation attempts to syslogd.

· complain - Profiles loaded in "complain" mode will not enforce policy.
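The difference between the two modes can be pictured with a toy model. This is only a sketch and not how AppArmor is implemented: the `check_access` function, the `Decision` type, and the use of a plain set of paths in place of real profile rules are all hypothetical. It also assumes that complain mode still reports violations, which is how the apparmor(7) man page describes it; the excerpt above is cut off before that point.

```python
from typing import AbstractSet, NamedTuple


class Decision(NamedTuple):
    permitted: bool  # does the access go ahead?
    reported: bool   # is a policy violation logged?


def check_access(mode: str, allowed: AbstractSet[str], path: str) -> Decision:
    """Toy profile decision; `allowed` stands in for real profile rules."""
    violation = path not in allowed
    if mode == "enforce":
        # Policy is enforced: violations are blocked and reported.
        return Decision(permitted=not violation, reported=violation)
    if mode == "complain":
        # Policy is not enforced: violations go through but are reported
        # (assumption taken from the man page, not from the excerpt).
        return Decision(permitted=True, reported=violation)
    raise ValueError(f"unknown mode: {mode!r}")


profile = {"/etc/hosts"}
print(check_access("enforce", profile, "/etc/shadow"))
print(check_access("complain", profile, "/etc/shadow"))
```

In this model the only difference between the modes is whether a violating access is permitted.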

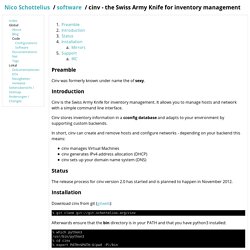

Cinv - the Swiss Army Knife for inventory management. Preamble: Cinv was formerly known under the name of sexy.

Introduction. Launchpad for Amazon Web Services. The Gold Standard for modern cloud-native applications is a serverless architecture.

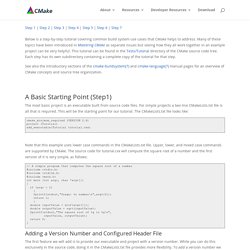

AWS Lambda allows you to implement scalable and fault-tolerant applications without the need for a single virtual machine. A serverless infrastructure based on AWS Lambda has two key benefits: you don’t need to manage a fleet of virtual machines anymore, and deploying new versions of your code can be entirely controlled by API calls. This article shows you how to process asynchronous tasks serverless. Possible use cases are: sending out massive amounts of emails, transcoding video files after upload, or analyzing user behavior. What is SQS? A best practice when building scalable and highly available systems on AWS is to decouple your microservice by using one of the following options:

Tutorial. The most basic project is an executable built from source code files.

Kubernetes: from load balancer to pod – Google Cloud Platform — Community. At work we use Kubernetes.

Last Friday I was talking to a colleague and we were wondering how load balancers, services and pods worked all together. Actually, everything is pretty well explained in the Services, Load Balancing, and Networking section from the Kubernetes concepts. However, you probably need a couple of reads to make sense of everything so I really needed to see it for myself and play with an example.

The basic question I was trying to answer was: what happens when you define a service as a load balancer and how do packets end up in my pod? So, let’s start with the example.

Reasons Kubernetes is cool. When I first learned about Kubernetes (a year and a half ago?) I really didn’t understand why I should care about it. I’ve been working full time with Kubernetes for 3 months or so and now have some thoughts about why I think it’s useful. (I’m still very far from being a Kubernetes expert!)

Test-Driven Development with Python. Harry Percival, Gillian McGarvey, Rebecca Demarest, Wendy Catalano, Randy Comer, David Futato. Copyright © 2014 Harry Percival. Printed in the United States of America.

1.3. A Quick Tour — Buildbot 0.9.12 documentation. 1.3.1. Goal. This tutorial will expand on the First Run tutorial by taking a quick tour around some of the features of buildbot that are hinted at in the comments in the sample configuration. We will simply change parts of the default configuration and explain the activated features.

Continuous Integration - Full Stack Python. Continuous integration automates the building, testing and deploying of applications. Software projects, whether created by a single individual or entire teams, typically use continuous integration as a hub to ensure important steps such as unit testing are automated rather than manual processes.

What's in a transport layer?

Microservices are small programs, each with a specific and narrow scope, that are glued together to produce what appears from the outside to be one coherent web application. This architectural style is used in contrast with a traditional "monolith" where every component and sub-routine of the application is bundled into one codebase and not separated by a network boundary.

In recent years microservices have enjoyed increased popularity, concurrent with (but not necessarily requiring the use of) enabling new technologies such as Amazon Web Services and Docker. In this article, we will take a look at the "what" and "why" of microservices and at gRPC, an open source framework released by Google, which is a tool organizations are increasingly reaching for in their migration towards microservices.

Why Use Microservices? To understand the general history and structure of microservices emerging as an architectural pattern, this Martin Fowler article is a good and fairly comprehensive read.

The evolution of data center networks. I asked Dinesh Dutt, chief scientist at Cumulus Networks and author of BGP in the Datacenter, to discuss how data centers have changed in recent years, new tools and techniques for network engineers, and what the future may hold for data center networking. Here are some highlights. How have data centers evolved over the past few years?

The Microcontainer Manifesto and the Right Tool for the Job. Containers have grown dramatically in popularity over the last few years. They have been used to replace both package managers and config management. Unfortunately, while the standard build process for containers is ideal for developers, the resulting container images make operators' jobs more difficult. While building cloud services here at Oracle, we identified a number of services that could benefit from containers, but we needed to ensure their stability and security. In order to be comfortable with using containers in production, we had to make some changes to our container build process. Problems Resulting from Docker Build: many of the problems arise from the practice of putting an entire Linux system into the container image.

IT Roadmap 2017. How do you build a container-ready application?