Researchers shut down AI that invented its own language.

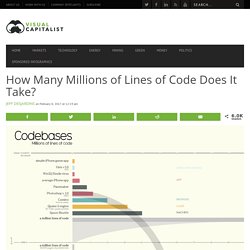

The observations made at Facebook are the latest in a long line of similar cases. In each instance, an AI being monitored by humans has diverged from its training in English to develop its own language. How Many Millions of Lines of Code Does It Take? Today’s data visualization comes from David McCandless from Information is Beautiful.

Buy their awesome book called Knowledge is Beautiful – we own the physical version, and it’s full of great data visualizations. How many millions of lines of code does it take to make the modern program, web service, car, or airplane possible? MIT's new method of radio transmission could one day make wireless VR a reality - The Verge. In pictures: The incredible history of early modern computing. These days we take computers very much for granted, but even the word “computer”, in the sense of an electronic device, was only coined in 1945.

Before that, a computer was simply a person who calculates. The last 70-odd years have seen a revolution in computing power beyond the wildest dreams of those early computing pioneers. However, without them none of the devices we use every single day could have been created. Tech billionaires convinced we live in the Matrix are secretly funding scientists to help break us out of it. Designed by Pierpaolo Lazzarini from Italian company Jet Capsule.

The I.F.O. is powered by eight electric engines, which are able to push the flying object to an estimated top speed of about 120mph. Stanford researchers invent tech workaround to net neutrality fights. Engineers at Stanford University have invented a new technology that would give broadband customers more control over their pipes and, they say, possibly put an end to a stale net neutrality debate in the U.S.

The new technology, called Network Cookies, would allow broadband customers to decide which parts of their network traffic get priority delivery and which parts are less time sensitive. A broadband customer could then decide video from Netflix should get preferential treatment over email messages, for example. The technology could put an end to the current net neutrality debate focused on whether broadband providers are allowed to prioritize some network traffic and block or degrade other traffic, said the researchers, Professors Nick McKeown and Sachin Katti and electrical engineering grad student Yiannis Yiakoumis.

The technology puts the control in the hands of broadband users, Yiakoumis said by email. Court Backs Rules Treating Internet as Utility, Not Luxury. WASHINGTON - High-speed internet service can be defined as a utility, a federal court has ruled, a decision clearing the way for more rigorous policing of broadband providers and greater protections for web users.

The decision from a three-judge panel at the United States Court of Appeals for the District of Columbia Circuit on Tuesday comes in a case about rules applying to a doctrine known as net neutrality, which prohibit broadband companies from blocking or slowing the delivery of internet content to consumers. Those rules, created by the Federal Communications Commission in early 2015, started a huge legal battle as cable, telecom and wireless internet providers sued to overturn regulations that they said went far beyond the F.C.C.’s authority and would hurt their businesses. Man Vs Machine: At Wipro, Artificial Intelligence Is About To Do The Job Of 3000 Engineers. The future is uncertain.

At least for the 3,000 software engineers whose tasks are about to be replaced by an artificial intelligence (AI) tool at Wipro. Mint’s Varun Sood reports that Wipro is all set to use “Holmes,” its artificial intelligence wizard, to automate certain projects, which in turn will free up 3,000 engineers from "mundane" software maintenance jobs. U.S. government agencies are still using Windows 3.1, floppy disks and 1970s computers. Some U.S. government agencies are using IT systems running Windows 3.1, the decades-old COBOL and Fortran programming languages, or computers from the 1970s.

A backup nuclear control messaging system at the U.S. Department of Defense runs on an IBM Series 1 computer, first introduced in 1976, and uses eight-inch floppy disks, while the Internal Revenue Service's master file of taxpayer data is written in assembly language code that's more than five decades old, according to a new report from the Government Accountability Office. Some agencies are still running Windows 3.1, first released in 1992, as well as the newer but unsupported Windows XP, Representative Jason Chaffetz, a Utah Republican, noted during a Wednesday hearing on outdated government IT systems. Microsoft deletes 'teen girl' AI after it became a Hitler-loving sex robot within 24 hours.

Minecraft to run artificial intelligence experiments. Minecraft is to become a testing ground for artificial intelligence experiments.

Microsoft, owner of the popular video game, revealed that computer scientists and amateurs will be able to evaluate and develop AI software using its virtual landscapes from July. The company says Minecraft is more "sophisticated" than existing AI research simulations and cheaper to use than building a robot. One expert said it had great potential. Inside the Artificial Universe That Creates Itself.

Every particle in the universe is accounted for.

The precise shape and position of every blade of grass on every planet has been calculated. Every snowflake and every raindrop has been numbered. Researchers develop ‘Superman memory crystal’ that could store 360TB of data forever. Researchers at the University of Southampton’s Optoelectronics Research Center have developed a new form of data storage that could potentially survive for billions of years. The research consists of nanostructured glass that can record digital data in five dimensions using femtosecond laser writing.

The crystal storage holds 360TB per disc and is stable at up to 1,000 degrees Celsius. You record data using an ultra-fast laser that produces short and intense pulses of light, on the order of one quadrillionth of a second each, and it writes the file in fused quartz, in three layers of nanostructured dots separated by five micrometers. Reading the data back requires pulsing the laser again, and recording the polarization of the waves with an optical microscope and polarizer. The five dimensions consist of the size and orientation in addition to the three-dimensional position of the nanostructures. 'Pharma Bro' Martin Shkreli's Pricing of Chagas Treatment Hurts Latinos. After dropping $2 million on a Wu-Tang Clan album, the pharmaceutical executive Martin Shkreli has found a new project: making an essential treatment unaffordable for poor immigrants from Latin America.

Shkreli, otherwise known as “pharma bro,” gained notoriety earlier this year when his company, Turing Pharmaceuticals, increased the price of a drug used to treat AIDS patients from around $13.50 to $750. He’s now the CEO of KaloBios Pharmaceuticals, which recently announced its plans to submit benznidazole, a treatment for Chagas disease purchased earlier this month, for Food and Drug Administration approval next year. The Centers for Disease Control and Prevention estimates that about 300,000 people in the United States have the deadly disease. MIT researchers create file system guaranteed not to lose data even if a PC crashes. Have you ever been working when the power goes poof and then you’ve lost all the data since you last saved? The day may be coming when you never have to worry about losing data again if a computer crashes thanks to a new “file system that could lead to computers guaranteed never to lose your data.”

Big brains at MIT have come up with “the first file system that is mathematically guaranteed not to lose track of data during crashes.” MIT's Electrical Engineering and Computer Science (EECS) Department announced that MIT researchers will present their new research on “Using Crash Hoare logic for Certifying the FSCQ File System” at the 25th ACM Symposium on Operating Systems Principles (SOSP'15) in October. Adam Chlipala, MIT Associate Professor of Computer Science, described the research paper as: FSCQ is a novel file system with a machine-checkable proof (using the Coq Proof Assistant) that its implementation meets its specification, even under crashes.

Researchers show computers can be hijacked to send data as sound waves. A team of security researchers has demonstrated the ability to hijack standard equipment inside computers, printers and millions of other devices in order to send information out of an office through sound waves. The attack program takes control of the physical prongs on general-purpose input/output circuits and vibrates them at a frequency of the researchers’ choosing, which can be audible or not.

The vibrations can be picked up with an AM radio antenna a short distance away. For decades, spy agencies and researchers have sought arcane ways of extracting information from keyboards and the like, successfully capturing light, heat and other emanations that allow the receivers to reconstruct content. The new makeshift transmitting antenna, dubbed “Funtenna” by lead researcher Ang Cui of Red Balloon Security, adds another potential channel that likewise would be hard to detect because no traffic logs would catch data leaving the premises. Reuters. These Are What the Google Artificial Intelligence’s Dreams Look Like.