Ensemble methods 4on1

A gentle introduction to decision trees using R

Introduction: most techniques of predictive analytics have their origins in probability or statistical theory (see my post on Naïve Bayes, for example).

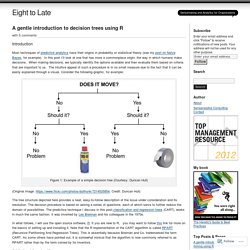

In this post I’ll look at one that has a more commonplace origin: the way in which humans make decisions. When making decisions, we typically identify the options available and then evaluate them against criteria that are important to us. The intuitive appeal of such a procedure owes much to the fact that it can be easily explained through a visual. Consider the following graphic, for example:

Artificial neural network

An artificial neural network is a system whose design was originally loosely inspired by the functioning of biological neurons, and which later moved closer to statistical methods[1].

Neural networks are generally optimized by probabilistic learning methods, in particular Bayesian ones. On the one hand, they belong to the family of statistical applications, which they enrich with a set of paradigms [2] for producing fast classifications (Kohonen networks in particular). On the other hand, they belong to the family of artificial intelligence methods, to which they provide a perceptual mechanism that is independent of the implementer's own ideas and that supplies input information to formal logical reasoning (see Deep Learning).

The Nature of Code

“You can’t process me with a normal brain.” (Charlie Sheen)

We’re at the end of our story.

This is the last official chapter of this book (though I envision additional supplemental material for the website and perhaps new chapters in the future). We began with inanimate objects living in a world of forces and gave those objects desires, autonomy, and the ability to take action according to a system of rules. Next, we allowed those objects to live in a population and evolve over time.

Artificial neural networks

100 Greatest Guitarists. Paul Simon, the great wordsmith, speaks as vividly through his guitar as through his lyrics.

Weaned on early doo-wop and rock & roll, Simon got caught up in the folk revival during the mid-Sixties, traveling to England to study the acoustic mastery of Bert Jansch. He has continued absorbing new influences, as on "Dazzling Blue," off his most recent album, So Beautiful or So What: "All that folk fingerpicking is what I did with Simon and Garfunkel, but [here] it's on top of this rhythm with Indian musicians playing in 12/8." At 70, he's as nimble as ever.

Neural networks and deep learning

The human visual system is one of the wonders of the world.

Consider the following sequence of handwritten digits:

Most people effortlessly recognize those digits as 504192. That ease is deceptive. In each hemisphere of our brain, humans have a primary visual cortex, also known as V1, containing 140 million neurons, with tens of billions of connections between them. And yet human vision involves not just V1, but an entire series of visual cortices (V2, V3, V4, and V5) doing progressively more complex image processing.

The difficulty of visual pattern recognition becomes apparent if you attempt to write a computer program to recognize digits like those above.

useR-machine-learning-tutorial/deep-neural-networks.Rmd at master · ledell/useR-machine-learning-tutorial

Mind: How to Build a Neural Network (Part One)

Artificial neural networks are statistical learning models, inspired by biological neural networks (central nervous systems, such as the brain), that are used in machine learning.

These networks are represented as systems of interconnected “neurons” that send messages to each other. The connections within the network can be systematically adjusted based on inputs and outputs, which makes them well suited to supervised learning. Neural networks can be intimidating, especially for people with little experience in machine learning and cognitive science! However, this tutorial uses code to explain how neural networks operate.
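To make the idea of neurons passing weighted messages concrete, here is a minimal forward pass written in Python with NumPy rather than the JavaScript the Mind tutorial uses. It is a sketch under stated assumptions: the sigmoid activation, the 2-3-1 layer sizes, and the random weights are all hypothetical choices for illustration, and no training (adjusting the connections) is shown.

```python
import numpy as np


def sigmoid(z):
    """Logistic activation: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))


def forward(x, weights, biases):
    """Propagate inputs x through each layer in turn.

    weights[k] has shape (n_in, n_out): entry [i, j] is the strength of the
    "synapse" from neuron i in one layer to neuron j in the next.
    """
    activation = np.asarray(x, dtype=float)
    for W, b in zip(weights, biases):
        # Each neuron sums its weighted inputs plus a bias, then "fires" via sigmoid.
        activation = sigmoid(activation @ W + b)
    return activation


# Hypothetical, untrained 2-3-1 network with random weights.
rng = np.random.default_rng(0)
weights = [rng.normal(size=(2, 3)), rng.normal(size=(3, 1))]
biases = [np.zeros(3), np.zeros(1)]
out = forward(np.array([[0.5, -1.0]]), weights, biases)
print(out.shape)  # (1, 1)
```

Training would then nudge each entry of `weights` and `biases` to reduce the gap between `out` and a known target, which is what "systematically adjusted based on inputs and outputs" refers to.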

By the end, you will know how to build your own flexible learning network, similar to Mind. The only prerequisites are a basic understanding of JavaScript, high-school calculus, and simple matrix operations. A neural network is a collection of “neurons” with “synapses” connecting them. In the tutorial's diagram, the circles represent neurons and the lines represent synapses.

Neural Networks using R – BI Corner

The intent of this article is not to tell you everything you wanted to know about artificial neural networks (ANN) and were afraid to ask.

For that you’ll have to ask someone else. Here I only intend to show how you might use R to implement an ANN model. One thing I will say is that I rarely use an ANN: I have found them to work best in an ensemble model (using averaging) with logistic regression models.

neuralnet depends on two other packages: grid and MASS (Venables and Ripley, 2002). The function neuralnet, used for training a neural network, lets you define the number of hidden layers and hidden neurons according to the complexity needed. formula: a symbolic description of the model to be fitted (see above).
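The BI Corner author's preferred setup, an ensemble that averages an ANN with logistic regression models, can be sketched as a (weighted) average of each model's predicted probabilities. This is a minimal Python illustration under assumptions: the probability arrays below are hypothetical stand-ins for the outputs of already-fitted models, and the optional `weights` argument and the 0.5 cut-off are illustrative choices, not something the original post specifies.

```python
import numpy as np


def average_ensemble(*prob_arrays, weights=None):
    """Combine predicted probabilities from several models by (weighted) averaging.

    Each argument is a 1-D array of P(class = 1) for the same observations.
    weights, if given, has one entry per model.
    """
    probs = np.vstack([np.asarray(p, dtype=float) for p in prob_arrays])
    return np.average(probs, axis=0, weights=weights)


# Hypothetical outputs of an ANN and a logistic regression on three observations.
ann_probs = np.array([0.9, 0.2, 0.6])
logit_probs = np.array([0.7, 0.4, 0.5])

combined = average_ensemble(ann_probs, logit_probs)
labels = (combined >= 0.5).astype(int)  # assumed 0.5 classification threshold
print(combined, labels)
```

Plain averaging tends to smooth out the higher variance of a single neural network, which is one plausible reason the combination works better than the ANN alone.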

The usage of neuralnet is illustrated by modeling the relationship between the case-control status (case) as the response variable and the four covariates age, parity, induced and spontaneous. nn.bp and nn.nnet show equal results.

ST4003 Lab5 Introduction to Neural Networks.

R Journal 2010-1, Guenther and Fritsch.

Neural network – R is my friend

I’ve made quite a few blog posts about neural networks and some of the diagnostic tools that can be used to ‘demystify’ the information contained in these models.

Frankly, I’m kind of sick of writing about neural networks, but I wanted to share one last tool I’ve implemented in R. I’m a strong believer that supervised neural networks can be used for much more than prediction, which most researchers assume is their only use. I hope that my collection of posts, including this one, has shown the versatility of these models for drawing inferences about causation. To date, I’ve authored posts on visualizing neural networks, animating neural networks, and determining the importance of model inputs. This post will describe a function for a sensitivity analysis of a neural network. The general goal of a sensitivity analysis is similar to evaluating the relative importance of explanatory variables, with a few important distinctions. Here’s what the model looks like:

Cheers, Marcus

¹ Garson GD. 1991.

Update: R-Session 11 - Statistical Learning - Neural Networks.

Neuralnet.

The 10 Algorithms Machine Learning Engineers Need to Know, by James Le, New Story Charity.
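The sensitivity analysis described in the "R is my friend" excerpt is not shown in code there. The sketch below is a one-at-a-time profile in the spirit of Lek's profile method. It is written in Python rather than R and is not the author's function. One input sweeps its observed range while every other input is held at a fixed quantile (the minimum, median and maximum are assumed here), and the model's predictions are recorded. The `predict` argument stands in for any fitted model. In the usage example it is a hypothetical linear function, chosen so the expected profiles are obvious.

```python
import numpy as np


def sensitivity_profile(predict, X, var_idx, n_steps=20, hold_quantiles=(0.0, 0.5, 1.0)):
    """One-at-a-time sensitivity of a fitted model to input column var_idx.

    The chosen input sweeps its observed range; every other input is held at
    the same quantile of its own column. Returns the sweep grid and an array of
    shape (len(hold_quantiles), n_steps): one prediction profile per quantile.
    """
    X = np.asarray(X, dtype=float)
    grid = np.linspace(X[:, var_idx].min(), X[:, var_idx].max(), n_steps)
    profiles = []
    for q in hold_quantiles:
        base = np.quantile(X, q, axis=0)      # every column at quantile q
        X_q = np.tile(base, (n_steps, 1))     # one row per grid point
        X_q[:, var_idx] = grid                # only the chosen input varies
        profiles.append(np.asarray(predict(X_q), dtype=float).ravel())
    return grid, np.vstack(profiles)


# Hypothetical stand-in for a trained network: depends only on column 0.
def predict(Z):
    return 2.0 * Z[:, 0]


X = np.array([[0.0, 5.0], [1.0, 3.0], [2.0, 4.0]])
grid0, prof0 = sensitivity_profile(predict, X, var_idx=0, n_steps=5)
grid1, prof1 = sensitivity_profile(predict, X, var_idx=1, n_steps=5)
print(prof0)  # responds to column 0
print(prof1)  # flat: column 1 has no effect
```

Unlike a single relative-importance score (as in Garson's weight-based approach), the profiles show how the response changes across an input's range and whether that depends on the levels of the other inputs.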

There is no doubt that the subfield of machine learning/artificial intelligence has gained popularity over the past couple of years. With Big Data the hottest trend in the tech industry at the moment, machine learning is a powerful way to make predictions or calculated suggestions based on large amounts of data. Some of the most common examples of machine learning are Netflix’s algorithms, which suggest movies based on the movies you have watched in the past, and Amazon’s algorithms, which recommend books based on the books you have bought before. So if you want to learn more about machine learning, how do you start? My own first introduction came when I took an Artificial Intelligence class while studying abroad in Copenhagen.

Fitting a neural network in R: the neuralnet package

Neural networks have always been one of the most fascinating machine learning models in my opinion, not only because of the fancy backpropagation algorithm, but also because of their complexity (think of deep learning with many hidden layers) and their structure inspired by the brain.

Neural networks have not always been popular, partly because they were, and in some cases still are, computationally expensive, and partly because they did not seem to yield better results than simpler methods such as support vector machines (SVMs). Nevertheless, neural networks have once again attracted attention and become popular. In this post we are going to fit a simple neural network using the neuralnet package and fit a linear model as a comparison.

The dataset: we are going to use the Boston dataset in the MASS package.

R Code Example for Neural Networks. See also NEURAL NETWORKS.

In this past June’s issue of the R Journal, the ‘neuralnet’ package was introduced. I had recently become familiar with using neural networks via the ‘nnet’ package (see my post on Data Mining in A Nutshell), but I find the neuralnet package more useful because it allows you to actually plot the network nodes and connections (it may be possible to do this with nnet, but I’m not aware of how). The neuralnet package was written primarily for multilayer perceptron architectures, which may be a limitation if you are interested in other architectures.
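For the Boston comparison described above (a neural network versus a linear model), both models need to be scored the same way on held-out data. The Python sketch below covers only the linear baseline and the scoring. The 75/25 random split and mean squared error as the metric are assumptions rather than details from the excerpt. Because the Boston data is not bundled with NumPy, the usage example runs on hypothetical synthetic data.

```python
import numpy as np


def train_test_split(X, y, train_frac=0.75, seed=0):
    """Randomly split rows into training and test sets (assumed 75/25)."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(X))
    n_train = int(round(train_frac * len(X)))
    tr, te = idx[:n_train], idx[n_train:]
    return X[tr], X[te], y[tr], y[te]


def fit_linear(X, y):
    """Ordinary least squares with an intercept column."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return coef


def predict_linear(coef, X):
    return coef[0] + X @ coef[1:]


def mse(y_true, y_pred):
    """Mean squared error: the same yardstick would be applied to the network."""
    return float(np.mean((np.asarray(y_true) - np.asarray(y_pred)) ** 2))


# Hypothetical synthetic data standing in for Boston (which is not available here).
rng = np.random.default_rng(42)
X = rng.uniform(size=(200, 3))
y = 1.0 + X @ np.array([2.0, -3.0, 0.5]) + rng.normal(scale=0.1, size=200)

X_tr, X_te, y_tr, y_te = train_test_split(X, y)
coef = fit_linear(X_tr, y_tr)
lm_mse = mse(y_te, predict_linear(coef, X_te))
print(coef, lm_mse)
```

A network fitted on the same `X_tr`/`y_tr` split (for example with neuralnet in R) would be scored with the same `mse` on `X_te`/`y_te`, so the two numbers are directly comparable.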

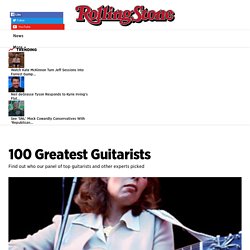

The data set used was a default data set found in the package ‘datasets’ and consisted of 248 observations and 8 variables:

Neural Networks with R – A Simple Example

In this tutorial a neural network (or multilayer perceptron, depending on naming convention) will be built that takes a number and calculates its square root (or as close to it as possible). Later tutorials will build upon this to make forecasting/trading models. The R library ‘neuralnet’ will be used to train and build the neural network. There is a lot of good literature on neural networks freely available on the internet. A good starting point is the neural network handout by Dr Mark Gayles at the Engineering Department, Cambridge University, which covers just enough to explain what a neural network is and what it can do without being so mathematically advanced as to overwhelm the reader.

The tutorial will produce the neural network shown in the image below. It takes a single input (the number whose square root you want) and produces a single output (the square root of that input). The middle of the image contains the 10 hidden neurons, which will be trained.
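The square-root network described above (one input, 10 hidden neurons, one output) can also be sketched without R. This minimal NumPy version trains by backpropagation with plain full-batch gradient descent, which differs from neuralnet's defaults (resilient backpropagation, `rprop+`, with logistic activations). Several details are assumptions made for the sketch: tanh hidden units, 50 training points drawn uniformly from [0, 100], and scaling both inputs and targets to roughly [0, 1] so that a small fixed learning rate stays stable.

```python
import numpy as np


def train_sqrt_net(n_hidden=10, n_train=50, epochs=20000, lr=0.02, seed=1):
    """Fit a 1-input, n_hidden-unit, 1-output perceptron to y = sqrt(x), x in [0, 100].

    Assumptions: tanh hidden units, linear output, inputs and targets scaled
    to [0, 1], and plain full-batch gradient descent on 0.5 * mean squared error.
    """
    rng = np.random.default_rng(seed)
    x = rng.uniform(0.0, 100.0, size=(n_train, 1))  # hypothetical training inputs
    xs, ys = x / 100.0, np.sqrt(x) / 10.0            # scale input and target to [0, 1]

    W1 = rng.normal(0.0, 0.5, size=(1, n_hidden))
    b1 = np.zeros(n_hidden)
    W2 = rng.normal(0.0, 0.5, size=(n_hidden, 1))
    b2 = np.zeros(1)

    for _ in range(epochs):
        h = np.tanh(xs @ W1 + b1)           # forward pass: hidden activations
        err = h @ W2 + b2 - ys              # output minus target
        # Backpropagation: push the error back through W2 and tanh'(z) = 1 - h**2
        dh = (err @ W2.T) * (1.0 - h ** 2)
        W2 -= lr * (h.T @ err) / n_train
        b2 -= lr * err.mean(axis=0)
        W1 -= lr * (xs.T @ dh) / n_train
        b1 -= lr * dh.mean(axis=0)

    def predict(x_new):
        """Predict sqrt(x_new) in the original (unscaled) units."""
        xn = np.asarray(x_new, dtype=float).reshape(-1, 1) / 100.0
        return (np.tanh(xn @ W1 + b1) @ W2 + b2).ravel() * 10.0

    return predict


predict_sqrt = train_sqrt_net()
print(np.round(predict_sqrt([4.0, 25.0, 81.0]), 2))
```

The fit is typically weakest near zero, where the square root is steepest, so small inputs are where the approximation error is most visible.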