Recurrent Neural Networks Tutorial, Part 1 – Introduction to RNNs – WildML Recurrent Neural Networks (RNNs) are popular models that have shown great promise in many NLP tasks. But despite their recent popularity I’ve only found a limited number of resources that thoroughly explain how RNNs work and how to implement them. That’s what this tutorial is about. As part of the tutorial we will implement a recurrent neural network-based language model. I’m assuming that you are somewhat familiar with basic Neural Networks. What are RNNs? The idea behind RNNs is to make use of sequential information. A recurrent neural network and the unfolding in time of the computation involved in its forward computation. The above diagram shows an RNN being unrolled (or unfolded) into a full network. There are a few things to note here: You can think of the hidden state as the memory of the network. What can RNNs do? RNNs have shown great success in many NLP tasks. Language Modeling and Generating Text since we want the output at step to be the actual next word. Machine Translation.
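To make the idea of the hidden state as the network's memory concrete, here is a minimal sketch of an unrolled recurrent forward pass in plain Python. The excerpt above does not give the update rule, so the form s_t = tanh(U x_t + W s_{t-1}) is an assumption, as are the names `rnn_step`, `run_rnn`, `U`, and `W`; treat it as an illustration rather than the tutorial's own implementation.

```python
import math


def rnn_step(x, s_prev, U, W):
    """One recurrent step, assuming s_t = tanh(U x_t + W s_{t-1})."""
    s = []
    for i in range(len(s_prev)):
        # Contribution of the current input.
        total = sum(U[i][j] * x[j] for j in range(len(x)))
        # Contribution of the previous hidden state (the "memory").
        total += sum(W[i][k] * s_prev[k] for k in range(len(s_prev)))
        s.append(math.tanh(total))
    return s


def run_rnn(inputs, U, W, hidden_size):
    """Unroll the network over a sequence, reusing U and W at every step."""
    s = [0.0] * hidden_size  # initial hidden state
    states = []
    for x in inputs:
        s = rnn_step(x, s, U, W)
        states.append(s)
    return states


# Hypothetical weights: identity input map, decaying recurrence.
U = [[1.0, 0.0], [0.0, 1.0]]
W = [[0.5, 0.0], [0.0, 0.5]]
states = run_rnn([[1.0, 0.0], [0.0, 0.0]], U, W, hidden_size=2)
print(states)
```

The second input is all zeros, yet the first component of the second state is nonzero: the information from step one has been carried forward through the hidden state.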

CNTK, Microsoft's new open-source deep learning toolkit on GitHub - El blog de Windows para América Latina Microsoft has begun making the tools that its own researchers use to accelerate advances in artificial intelligence available to a wider group of developers, by releasing its Computational Network Toolkit on GitHub. The researchers developed this open-source toolkit, nicknamed CNTK, out of necessity. Xuedong Huang, Microsoft's chief speech scientist, said that he and his team were eager to make faster improvements in how computers understand speech, and that the tools they had to work with were slowing them down. So a group of volunteers set out to solve the problem themselves, with a homegrown solution that prioritized performance above all else. The effort paid off. “The CNTK toolkit is much more efficient than any other we have seen,” Huang said. Xuedong Huang (photo by Scott Eklund/Red Box Pictures)

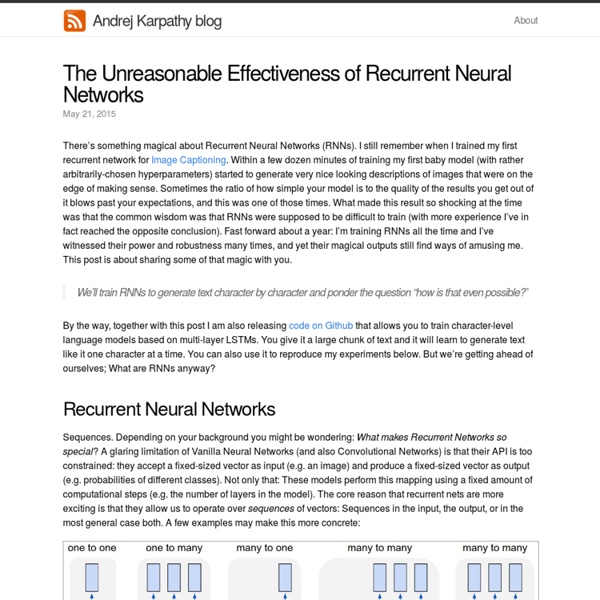

Understanding LSTM Networks -- colah's blog Posted on August 27, 2015 Recurrent Neural Networks Humans don’t start their thinking from scratch every second. As you read this essay, you understand each word based on your understanding of previous words. You don’t throw everything away and start thinking from scratch again. Traditional neural networks can’t do this, and it seems like a major shortcoming. Recurrent neural networks address this issue. Recurrent Neural Networks have loops. In the above diagram, a chunk of neural network, A, looks at some input x_t and outputs a value h_t. These loops make recurrent neural networks seem kind of mysterious. An unrolled recurrent neural network. This chain-like nature reveals that recurrent neural networks are intimately related to sequences and lists. And they certainly are used! Essential to these successes is the use of “LSTMs,” a very special kind of recurrent neural network which works, for many tasks, much much better than the standard version. The Problem of Long-Term Dependencies Conclusion

Commercial interests shape the future of artificial intelligence | Science The future of artificial intelligence generates a great deal of debate because it will be decisive in fields as serious as medicine, warfare, work, and even human relationships. However, those debates often ignore an issue that hangs over all the others: the development of thinking machines has been captured by technology companies that are defining what that future will look like. Companies such as Google, Facebook, Amazon, Microsoft, Apple, and IBM hire the best artificial intelligence experts from around the world, strip entire university departments to meet their needs, buy up the sector's startups, and set the direction of research with grants and funding. As a result, a scientific field as consequential as artificial intelligence may be excessively geared toward the commercial interests of these businesses. It is not just about sharing a workplace with the best. Control over academia

Understanding Machine Learning Infographic We now live in an age where machines can teach themselves without human intervention. What It Is Machine learning (ML) deals with systems and algorithms that can learn from various data and make predictions. Theory The main goal of a learner is to generalize, and a learning machine able to do that can perform accurately on new or unforeseen tasks. History In the early days of AI, researchers were very interested in machines that could learn from data. How It Is Done Supervised ML – relies on data where the true label is indicated. Approaches There are over a dozen approaches employed in ML. Some of these include: Applications The importance of ML is that, since it’s data-driven, it can be trained to create valuable predictive models that can guide proper decisions and smart actions.

The Difference Between AI, Machine Learning, and Deep Learning? This is the first of a multi-part series explaining the fundamentals of deep learning by long-time tech journalist Michael Copeland. Artificial intelligence is the future. Artificial intelligence is science fiction. Artificial intelligence is already part of our everyday lives. All those statements are true; it just depends on what flavor of AI you are referring to. For example, when Google DeepMind’s AlphaGo program defeated South Korean Master Lee Se-dol in the board game Go earlier this year, the terms AI, machine learning, and deep learning were used in the media to describe how DeepMind won. The easiest way to think of their relationship is to visualize them as concentric circles with AI — the idea that came first — the largest, then machine learning — which blossomed later, and finally deep learning — which is driving today’s AI explosion — fitting inside both. From Bust to Boom Over the past few years AI has exploded, especially since 2015. Good, but not mind-bendingly great.

Google researchers teach AIs to see the important parts of images — and tell you about them | TechCrunch This week is the Computer Vision and Pattern Recognition conference in Las Vegas, and Google researchers have several accomplishments to present. They’ve taught computer vision systems to detect the most important person in a scene, pick out and track individual body parts, and describe what they see in language that leaves nothing to the imagination. First, let’s consider the ability to find “events and key actors” in video, a collaboration between Google and Stanford. Footage of scenes like basketball games contains dozens or even hundreds of people, but only a few are worth paying attention to. The CV system described in this paper uses a recurrent neural network to create an “attention mask” for every frame, then tracks the relevance of each object as time proceeds. Over time the system is able to pick out not only the most important actor, but also potentially important actors and the events with which they are associated.

Classification with Feed-Forward Neural Networks — PyBrain v0.3 documentation This tutorial walks you through the process of setting up a dataset for classification and training a network on it while visualizing the results online. First we need to import the necessary components from PyBrain.
    from pybrain.datasets import ClassificationDataSet
    from pybrain.utilities import percentError
    from pybrain.tools.shortcuts import buildNetwork
    from pybrain.supervised.trainers import BackpropTrainer
    from pybrain.structure.modules import SoftmaxLayer
Furthermore, pylab is needed for the graphical output.
    from pylab import ion, ioff, figure, draw, contourf, clf, show, hold, plot
    from scipy import diag, arange, meshgrid, where
    from numpy.random import multivariate_normal
To have a nice dataset for visualization, we produce a set of points in 2D belonging to three different classes. Randomly split the dataset into 75% training and 25% test data sets. tstdata, trndata = alldata.splitWithProportion( 0.25 ) trndata. Test our dataset by printing a little information about it.
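The random 75%/25% split described above can be illustrated without PyBrain. The following is a minimal standard-library sketch of the idea, not PyBrain's `splitWithProportion` itself; the helper name `random_split`, the `seed` parameter, and the toy dataset are all hypothetical.

```python
import random


def random_split(samples, proportion, seed=None):
    """Shuffle a copy of samples and cut it into two parts.

    The first part holds roughly `proportion` of the samples and the
    second part holds the rest.
    """
    rng = random.Random(seed)
    shuffled = list(samples)  # copy, so the caller's data is untouched
    rng.shuffle(shuffled)
    cut = int(len(shuffled) * proportion)
    return shuffled[:cut], shuffled[cut:]


# Toy dataset: 400 (feature, class) pairs spread over three classes.
all_data = [(i, i % 3) for i in range(400)]
test_data, train_data = random_split(all_data, 0.25, seed=0)
print(len(test_data), len(train_data))
```

Shuffling before cutting matters here: the toy data is ordered, so slicing it directly would give a test set that is not a random sample of the whole.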

AI just defeated a human fighter pilot in an air combat simulator Retired United States Air Force Colonel Gene Lee recently went up against ALPHA, an artificial intelligence developed by a University of Cincinnati doctoral graduate. The contest? A high-fidelity air combat simulator. And the Colonel lost. In fact, all the other AIs that the Air Force Research Lab had in its possession also lost to ALPHA…and so did all of the other human experts who tried their skills against ALPHA’s superior algorithms. And did we mention ALPHA achieves superiority while running on a US$35 Raspberry Pi? Saying that Lee is experienced when it comes to aerial combat is a remarkable understatement. Yet, he was not successful in winning against ALPHA. "I was surprised at how aware and reactive it was. ALPHA makes decisions using a genetic fuzzy tree system, which is a subtype of fuzzy logic algorithms. The future of air combat UC grad and Psibernetix President and CEO Nick Ernest, David Carroll, and Gene Lee (seated).

Alzheimer’s Prediction May Be Found in Writing Tests Is it possible to predict who will develop Alzheimer’s disease simply by looking at writing patterns years before there are symptoms? According to a new study by IBM researchers, the answer is yes. And, they and others say that Alzheimer’s is just the beginning. For the Alzheimer’s study, the researchers looked at a group of 80 men and women in their 80s — half had Alzheimer’s and the others did not. The men and women were participants in the Framingham Heart Study, a long-running federal research effort that requires regular physical and cognitive tests. The researchers examined the subjects’ word usage with an artificial intelligence program that looked for subtle differences in language. The members of that group turned out to be the people who developed Alzheimer’s disease. “We had no prior assumption that word usage would show anything,” said Ajay Royyuru, vice president of health care and life sciences research at IBM Thomas J. “What is going on here is very clever,” said Dr. Dr.