About the Data Science Association. Your Information is a Product. Most organizations today still treat data as a raw material to be mined, with industrial processes for staged production.

Big Data. Big data analytics guide for data architects. World's Biggest Data Breaches & Hacks. Information Is Beautiful. Let us know if we missed any big data breaches. 70% of passwords are in this chart. Is yours?

Safely check if your details have been compromised in any recent data breaches. See the data: bit.ly/bigdatabreaches. This interactive ‘Balloon Race’ code is powered by our forthcoming VizSweet software, a set of high-end dataviz tools for generating interactive visualisations. Machine Data. Natalino Busa. Parquet. Cloudera/Impala. Yahoo/storm-yarn.

Summingbird: Streaming MapReduce at Twitter. Lambdoop, a framework for easy development of big data applications. Storm, distributed and fault-tolerant realtime computation. Why Content Analytics Will Tell You A Lot More Than Business Intelligence. Of course you know all about web analytics or social media analytics. Earlier I described the three different “…tives” in analytics that are also very important to know, but there is another type of analytics that cannot be overlooked.

In Gartner’s Hype Cycle of Emerging Technologies they place Content Analytics at the end of the “Peak of Inflated Expectations” and they expect it to take another 5-10 years before it reaches the “Plateau of Productivity”. But what is Content Analytics, what makes it so special that Gartner includes it and why should you be paying attention to it?

Content analytics can be defined as unlocking business value from unstructured content via semantic technologies to find answers to important questions or discover the causes of certain trends. Companies can use content analytics to understand the content that is created, how it is used, the context it is in and the nature of that content. There are several benefits from content analytics. Image: Skane. People. Transforming. Data. The Big Data Insight Group. Big Data: Turning disparate types of data into actionable intelligence. Big Data describes the process of extracting actionable intelligence from disparate, and oftentimes non-traditional, data sources.

These data sources may include structured data such as databases, sensor, click stream and location data, as well as unstructured data like email, HTML, social data and images. The actionable data may be represented visually (e.g. in a graph), but it is often distilled down to a structured format, which is then stored in a database for further manipulation. The sheer size of data being collected is more than traditional compute infrastructures can handle, exceeding the capacities of databases, storage, networks and everything in between. Extracting actionable intelligence from Big Data requires handling large amounts of disparate data and processing it very quickly. Flash Memory Systems, FAST Flash. Maestro 2510. Violin Maestro 2510 provides flash-based application acceleration, tiering, migration and data protection services.

Windows Flash Array. The Windows Flash Array delivers amazing performance, superior scalability, and simplified management for Windows environments. Symphony. Blog. Patterns in Navigation Paths. Introduction. When you describe a path, you probably imagine something like “X -Y-> Z” to describe a “Y” relationship between two objects, “X” and “Z”.

Describing the path result that you want to see this way is simple and intuitive, but representing this result programmatically through a navigation API can be rather difficult unless you have a supported path syntax that can capture this intuitive expressiveness. Imagine querying using this type of syntax to find paths of a certain pattern: consider paths across multiple social network datasets where you would like to find the pattern “” which may represent a compile-level dependency on a certain native library.
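To make the “X -Y-> Z” idea concrete, here is a minimal sketch of matching a sequence of relationship labels against a small in-memory graph. It is a toy illustration only and is not InfiniteGraph's path matching syntax or API; the function name `find_paths`, the edge-tuple layout and the sample data are all assumptions made for this example.

```python
from typing import Dict, List, Tuple

# Hypothetical edge representation: (source, relationship, target).
Edge = Tuple[str, str, str]


def find_paths(edges: List[Edge], pattern: List[str], start: str) -> List[List[str]]:
    """Return every path from `start` whose edge labels match `pattern` in order."""
    # Index targets by (source, relationship) so each step is a direct lookup.
    outgoing: Dict[Tuple[str, str], List[str]] = {}
    for src, label, dst in edges:
        outgoing.setdefault((src, label), []).append(dst)

    paths: List[List[str]] = [[start]]
    for label in pattern:
        next_paths: List[List[str]] = []
        for path in paths:
            # Extend each partial path along edges carrying the required label.
            for dst in outgoing.get((path[-1], label), []):
                next_paths.append(path + [dst])
        paths = next_paths
    return paths


# Sample data (made up): "X -Y-> Z" and "Z -Y-> W", plus an unrelated edge.
edges = [
    ("X", "Y", "Z"),
    ("X", "knows", "A"),
    ("Z", "Y", "W"),
]

print(find_paths(edges, ["Y"], "X"))       # [['X', 'Z']]
print(find_paths(edges, ["Y", "Y"], "X"))  # [['X', 'Z', 'W']]
```

The pattern here is just a list of relationship names, which is far less expressive than a dedicated path syntax, but it shows why a declarative pattern is easier to state than hand-written traversal code.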

This intuitive way of describing a path was the motivation for the new path matching syntax in InfiniteGraph 3.2! Describing the Problem. The Maturing of Big Data: From Herding Cats to Taming Tigers. You can’t declare something mature until it has stopped developing.

By that criterion, the big-data market is far from mature. It continues to foster an impressive amount of innovation into new types of databases, analytical approaches and applications that infuse data-driven optimization into every aspect of our existence. Today’s big-data space is a sprawling menagerie of innovative database architectures for the new world of Internet-centric computing. Accent on the “sprawling.” Supply Chain Necessities for 2013 and Beyond. Sean Riley, Director of Supply Chain Innovation for Software AG and writing for Supply Chain Management Review, recounted some of the supply chain trends that shaped 2012.

In doing so, he began by pointing out that as supply chain technologies continued to evolve in 2012, more and more companies realized the inevitable fact that supply chain visibility was the key factor and necessity “for success in today’s business economy.” It is the fortunate emergence of cloud-based supply chain tools and the increasing willingness of enterprises to adopt cloud-based strategies that is beginning to make near real-time supply chain visibility and broader collaboration possible for small to mid-sized business manufacturers and distributors.

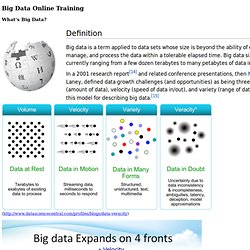

Many of these SMEs may see the value of end-to-end supply chain visibility and exhibit a heartfelt desire to participate in secure data flows between collaborative partners; however, they lack the information technology assets to build the linkages themselves. Untitled. What's Big Data?

Big Data Market Size And Vendor Revenues. By Jeff Kelly with David Vellante and David Floyer. This is the 2011 report, originally published on February 15, 2012.

See Big Data Vendor Revenue and Market Forecast 2012-2017 for the 2012 update. The Big Data market is on the verge of a rapid growth spurt that will see it top the $50 billion mark worldwide within the next five years. Big Data Part II. Steve LeSueur [Executive Host] Contributing Editor, 1105 Government Information Group Robert Ames Senior Vice President, Information and Communications Technologies, In-Q-Tel Robert Ames is a Senior Vice President, Information and Communications Technologies at In-Q-Tel.

In this role, he leads the practice in setting the technical strategy, objectives, and investment directions to support the mission needs of the Intelligence Community. Data management - PwC New Zealand. Our national Data Management Group (DMG) uses a variety of sophisticated data analysis tools and techniques to provide our clients with valuable information about how their processes can be improved. We have successfully applied data analytics to help our clients: Identify fraud risks or a breakdown in internal controls, for example, collusion between vendors and employees, duplicate payments, breaches in delegated authority or unusual transactions.

Prioritise their internal audit resources. Recover GST overpayments, leverage vendor spend, benchmark performance internally and externally, and optimise business processes. Identify revenue leakage. Big Data Bracketology. We are officially in one of my favorite months of the year: March Madness! It should be March every month, except when it’s December…but that’s a whole other story. This March, IBM big data is bringing their “Smart Sixteen” teams from the Marketing, Finance, IT and Infrastructure regions to the big data dance! The question on everyone’s mind is which teams will make it to the final showdown as the most important consideration in realizing business value through big data. Pundits, analysts, consultants, CEOs, CMOs, CFOs, COOs, CIOs (did I miss any O’s?) and bracketeers across the social media world are champing at the bit to throw their bracket picks into the big data dance.

So let’s break down the “Smart Sixteen” teams in the big data dance by the four regions: The dark side of Customer Analytics! Big Data investment map. Data and Analytics. Some weeks ago I gave a talk about the "Dark Side of Open Data" at the Open Data Institute, where I predicted that the major beneficiaries of government data were not going to be private citizens, taxpayers, or enthusiastic small startups, but large enterprises with deep pockets and less than altruistic service models.

The slide I used noted that history tells us any potential goldmine will be mined, and the obvious business model would be:

- Triangulation of Open Data sources and other freely available data (Anonymisation is bunk)
- Buy other private (maybe "lost") data for triangulation (Anonymisation is bunk part II)
- Use Open Data, together with Big Computing, to drive products for commercial purposes

As to who would do this, the question I posed was "Which side are all the sharpest knives on?"

Efficient Construction Project Delivery Methods - Sustainability - 3D, 4D, 5D BIM - IPD, JOC, SABER, IDIQ, SATOC, MATOC, MACC, POCA .. “Building Information Modelling (BIM) is digital representation of physical and functional characteristics of a facility creating a shared knowledge resource for information about it forming a reliable basis for decisions during its life cycle, from earliest conception to demolition.” “BIM provides a common environment for all information defining a building, facility or asset, together with its common parts and activities. This includes building shape, design and construction time, costs, physical performance, logistics and more.

More importantly, the information relates to the intended objects (components) and processes, rather than relating to the appearance and presentation of documents and drawings. More traditional 2D or 3D drawings may well be outputs of BIM, however, instead of generating in the conventional way i.e. as individual drawings, could all be produced directly from the model as a “view” of the required information.” – RICS.

Data Warehousing. Grant Thornton - Business intelligence. Bigdataq.com. –Big Data 2011 Preview « @Zettaforce. During the 2011 National Football League (NFL) playoff TV broadcasts — amid commercials with Anheuser-Busch Clydesdales and auto racing driver Danica Patrick — an ad appeared with an IBM researcher talking about data analytics. In the IBM TV ad, Dr. David Ferrucci discusses how an IBM Watson supercomputer competes in a Jeopardy! Open Thoughts on Software, Business, Life. The Bigger Truth, Steve Duplessie. Efficient Construction Project Delivery Methods - Sustainability - 3D, 4D, 5D BIM - IPD, JOC, SABER, IDIQ, SATOC, MATOC, MACC, POCA ..

In 2010 the amount of data collected since the dawn of humanity all the way up until 2003 was equivalent to the volume produced every two days in the new age of information. - Eric Schmidt, Chairman of Google “Big data” — the ability to acquire, process and sort vast quantities of information for timely decision support is critical to the efficient life-cycle management of the built environment. Big Data Analytics - Datameer Big Data. Big Data Analytics Use Cases. Big Data Market Size And Vendor Revenues. Open Source Distributed Real Time Search & Analytics. Flutura + M2M + Big Data Analytics = Blue Ocean Opportunities. Column Store. By Colin Mahony and Shilpa Lawande Part II – Understanding the Simplicity of Projections and the Vertica Database Designer™ In Part I of this post , we introduced you to the simple concept of Vertica’s projections. Now that you have an understanding of what they are, we wanted to go into more detail on how users interface with them, and introduce you to Vertica’s unique Database Designer tool.

For each table in the database, Vertica requires a minimum of one projection, called a “superprojection”. A superprojection is a projection for a single table that contains all the columns and rows in the table. To get your database up and running quickly, Vertica automatically creates a default superprojection for each table created through the CREATE TABLE and CREATE TEMPORARY TABLE statements.
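The role of the superprojection can be sketched with a toy column-oriented model. This is not Vertica's implementation or API: the `Projection` and `Table` classes, the `pick_projection` rule (choose the narrowest projection covering the requested columns) and the sample data are all assumptions made for illustration, and physical design details such as sort order and compression are ignored.

```python
from typing import Dict, List, Sequence, Tuple


class Projection:
    """Toy column-oriented copy of some (or all) of a table's columns."""

    def __init__(self, name: str, columns: Dict[str, List]):
        self.name = name
        self.columns = columns

    def covers(self, wanted: Sequence[str]) -> bool:
        # A projection can answer a query only if it stores every requested column.
        return all(col in self.columns for col in wanted)

    def select(self, wanted: Sequence[str]) -> List[Tuple]:
        # Reassemble rows from the stored columns.
        return list(zip(*(self.columns[col] for col in wanted)))


class Table:
    def __init__(self, name: str, column_names: Sequence[str], rows: Sequence[Tuple]):
        # The superprojection holds all columns and all rows of the table.
        self.superprojection = Projection(
            name + "_super",
            {col: [row[i] for row in rows] for i, col in enumerate(column_names)},
        )
        self.projections = [self.superprojection]

    def add_projection(self, name: str, column_names: Sequence[str]) -> Projection:
        # A narrower projection stores only a subset of the columns.
        proj = Projection(
            name, {col: self.superprojection.columns[col] for col in column_names}
        )
        self.projections.append(proj)
        return proj

    def pick_projection(self, wanted: Sequence[str]) -> Projection:
        # Assumed rule: use the narrowest projection that covers the query.
        # The superprojection covers every valid column, so it is the fallback.
        candidates = [p for p in self.projections if p.covers(wanted)]
        if not candidates:
            raise KeyError(f"unknown column in {list(wanted)}")
        return min(candidates, key=lambda p: len(p.columns))


sales = Table("sales", ["id", "region", "amount"], [(1, "EU", 10.0), (2, "US", 25.5)])
sales.add_projection("sales_by_region", ["region", "amount"])

print(sales.pick_projection(["region"]).name)        # sales_by_region
print(sales.pick_projection(["id", "amount"]).name)  # sales_super
print(sales.pick_projection(["id", "amount"]).select(["id", "amount"]))
```

In this sketch, any query over valid columns finds at least one covering projection, because the superprojection always qualifies.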

By creating a superprojection for each table in the database, Vertica ensures that all SQL queries can be answered. 1. Solutions. Finally a database is fast enough and powerful enough to replace all the Band-Aids of real-time data management solutions. We have caches because the database isn’t fast enough. We have stream processing because the database isn’t fast enough. We batch ETL because the database isn’t fast enough. We use old data to make current decisions because the database isn’t fast enough. What if you had a database, the right tool for all these jobs, that was fast enough, scalable enough and reliable enough to remove the dependency on all these workarounds?

Well, … that’s precisely why the fastest applications in the world run on VoltDB. From applications developed to save human lives to helping mobile game developers build blockbusters, VoltDB is a developer’s dream when building applications that scale. Commercial and Open Source Big Data Platforms Comparison. Curator's note: This post was authored by Lee Kyu Jae. Dealing with Future Problems. Self Service Business Intelligence, Analytics und Performance Management. Cc-wiki-dump « Blog – Stack Exchange. All content contributed to the Stack Exchange network is licensed under cc-wiki (aka cc-by-sa). Big Data & Business Strategy Consulting - NewVantage Partners.

Information. Streaming CEP. Products. Hadoop. Why HPCC is a superior alternative to Hadoop. Enterprise Ready. Batteries included: All components are included in a consistent and homogeneous platform. A single configuration tool, a complete management system, seamless integration with existing enterprise monitoring systems and all the documentation needed to operate the environment are part of the package.

Beyond MapReduce. Hpcc-systems (HPCC Systems). Platforms for Big Data.