How-To: Python Compare Two Images

Would you have guessed that I’m a stamp collector?

Just kidding. I’m not. But let’s play a little game of pretend. Let’s pretend that we have a huge dataset of stamp images, and we want to take two arbitrary stamp images and compare them to determine if they are identical, or nearly identical in some way.

In general, we can accomplish this in two ways. The first method is to use locality-sensitive hashing, which I’ll cover in a later blog post. The second method is to use algorithms such as Mean Squared Error (MSE) or the Structural Similarity Index (SSIM). In this blog post I’ll show you how to use Python to compare two images using Mean Squared Error and Structural Similarity Index.

OpenCV and Python versions: This example will run on Python 2.7/Python 3.4+ and OpenCV 2.4.X/OpenCV 3.0+.
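To make the MSE idea concrete, here is a minimal sketch, not the post's own code. It assumes NumPy and Python 3 are available, and that both images are arrays of the same shape. MSE is the average of the squared per-pixel differences, so 0 means the images are identical and larger values mean they differ more. The function name `mse` and the tiny synthetic arrays are illustrative assumptions standing in for real stamp scans.

```python
import numpy as np

def mse(image_a, image_b):
    """Mean squared error between two same-sized images (0.0 means identical)."""
    if image_a.shape != image_b.shape:
        raise ValueError("images must have the same dimensions")
    # Cast to float first so uint8 subtraction cannot wrap around.
    diff = image_a.astype("float64") - image_b.astype("float64")
    return float(np.mean(diff ** 2))

# Synthetic 4x4 grayscale "images" standing in for real stamp scans.
original = np.full((4, 4), 100, dtype=np.uint8)
brighter = original + 10          # every pixel differs by exactly 10

print(mse(original, original))    # 0.0
print(mse(original, brighter))    # 100.0
```

Note that MSE only measures raw pixel differences, so a uniform brightness shift like the one above already produces a large error even though the picture content is unchanged.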

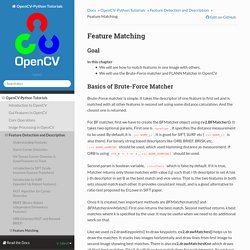

Let’s start off by taking a look at our example dataset:

Figure 1: Our example image dataset.

Let’s take a look at the Mean Squared Error equation:

Equation 1: Mean Squared Error.

Feature Matching (OpenCV-Python Tutorials 1 documentation)

Basics of Brute-Force Matcher

The Brute-Force matcher is simple. It takes the descriptor of one feature in the first set and matches it against all features in the second set using some distance calculation, and the closest one is returned.

For the BF matcher, we first have to create the BFMatcher object using cv2.BFMatcher(). It takes two optional params. The first, normType, specifies the distance measurement to use. The second param is a boolean variable, crossCheck, which is false by default. Once the matcher is created, two important methods are BFMatcher.match() and BFMatcher.knnMatch().

Like we used cv2.drawKeypoints() to draw keypoints, cv2.drawMatches() helps us to draw the matches. Let’s see one example for each of SURF and ORB (both use different distance measurements).

Brute-Force Matching with ORB Descriptors

Here, we will see a simple example of how to match features between two images. We are using ORB descriptors to match features.
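To show what brute-force matching computes, the following is a NumPy-only sketch of the idea. It is not the tutorial's code and not OpenCV's implementation. It assumes binary descriptors stored as uint8 rows (the kind ORB produces) compared with Hamming distance, and it uses three hand-made one-byte descriptors instead of real image features. The names `hamming_distances` and `brute_force_match` are hypothetical. The `cross_check` flag mimics the behaviour described for crossCheck: a pair is kept only if each feature is the other's closest match.

```python
import numpy as np

def hamming_distances(des_a, des_b):
    """Pairwise Hamming distances between two sets of uint8 binary descriptors."""
    # XOR every descriptor in des_a with every descriptor in des_b, then count set bits.
    xor = des_a[:, None, :] ^ des_b[None, :, :]
    return np.unpackbits(xor, axis=2).sum(axis=2)

def brute_force_match(des_a, des_b, cross_check=False):
    """Return (index_a, index_b, distance) tuples sorted by distance."""
    dist = hamming_distances(des_a, des_b)
    best_b_for_a = dist.argmin(axis=1)   # closest feature in B for each feature in A
    best_a_for_b = dist.argmin(axis=0)   # closest feature in A for each feature in B
    matches = []
    for i, j in enumerate(best_b_for_a):
        # With cross-checking, keep (i, j) only if the two features pick each other.
        if cross_check and best_a_for_b[j] != i:
            continue
        matches.append((i, int(j), int(dist[i, j])))
    return sorted(matches, key=lambda m: m[2])

# Hand-made one-byte descriptors (assumed data, not extracted from images).
des_a = np.array([[0b00000000], [0b11111111], [0b00001111]], dtype=np.uint8)
des_b = np.array([[0b00000001], [0b11111110]], dtype=np.uint8)

print(brute_force_match(des_a, des_b))                    # [(0, 0, 1), (1, 1, 1), (2, 0, 3)]
print(brute_force_match(des_a, des_b, cross_check=True))  # [(0, 0, 1), (1, 1, 1)]
```

In the second call the third descriptor of the first set loses its match, because its nearest neighbour in the second set prefers a different partner. That is the filtering effect cross-checking is meant to provide.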

Below is the result I got:

[Figure not included: result of the ORB matching example.]

Template Matching (OpenCV-Python Tutorials 1 documentation)

Goals

In this chapter, you will learn to find objects in an image using Template Matching. You will see these functions: cv2.matchTemplate(), cv2.minMaxLoc()

Theory

Template Matching is a method for searching and finding the location of a template image in a larger image.

OpenCV comes with a function cv2.matchTemplate() for this purpose. If the input image is of size (W x H) and the template image is of size (w x h), the output image will have a size of (W-w+1, H-h+1).

Note: If you are using cv2.TM_SQDIFF as the comparison method, the minimum value gives the best match.

Template Matching in OpenCV

Here, as an example, we will search for Messi’s face in his photo.
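Separately from the Messi example, the output-size rule and the squared-difference note can be checked with a small NumPy-only sketch. This is an illustration of the sliding-window idea under stated assumptions, not the tutorial's code and not how OpenCV implements cv2.matchTemplate(). The function name `match_template_sqdiff` is hypothetical, and the image is random noise with the template cut out of it at a known position.

```python
import numpy as np

def match_template_sqdiff(image, template):
    """Slide template over image; return the sum of squared differences per offset."""
    H, W = image.shape
    h, w = template.shape
    image = image.astype("float64")
    template = template.astype("float64")
    # One score per valid top-left corner: (H-h+1) rows by (W-w+1) columns.
    result = np.empty((H - h + 1, W - w + 1))
    for y in range(result.shape[0]):
        for x in range(result.shape[1]):
            patch = image[y:y + h, x:x + w]
            result[y, x] = np.sum((patch - template) ** 2)
    return result

rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(20, 30)).astype(np.uint8)   # H=20, W=30
template = image[5:9, 12:18].copy()                            # h=4, w=6, cut at x=12, y=5

result = match_template_sqdiff(image, template)
# For squared differences the best match is the minimum, not the maximum.
y, x = np.unravel_index(np.argmin(result), result.shape)

print(result.shape)   # (17, 25): NumPy lists rows first, so this is (H-h+1, W-w+1)
print(x, y)           # 12 5
```

The double loop is deliberately naive so the arithmetic stays visible. On real images a library routine would be the practical choice.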

We will try all the comparison methods so that we can see what their results look like:

See the results below:

Image comparison - fast algorithm

Building an image search engine using Python and OpenCV

Let’s face it. Trying to search for images based on text and tags sucks. Whether you are tagging and categorizing your personal images, searching for stock photos for your company website, or simply trying to find the right image for your next epic blog post, trying to use text and keywords to describe something that is inherently visual is a real pain. I faced this pain myself last Tuesday as I was going through some old family photo albums that were scanned and digitized nine years ago. You see, I was looking for a bunch of photos that were taken along the beaches of Hawaii with my family. I opened up iPhoto, and slowly made my way through the photographs. Perhaps by luck, I stumbled across one of the beach photographs. After seeing this photo, I stopped my manual search and opened up a code editor.

While applications such as iPhoto let you organize your photos into collections and even detect and recognize faces, we can certainly do more. Wouldn’t that be cool? Sound interesting?