Removing old unused kernels from a Proxmox server. Proxmox. Proxmox Container Toolkit. Container images, sometimes also referred to as “templates” or “appliances”, are tar archives which contain everything needed to run a container.

You can think of it as a tidy container backup. Like most modern container toolkits, pct uses those images when you create a new container, for example:

pct create 999 local:vztmpl/debian-8.0-standard_8.0-1_amd64.tar.gz

Proxmox VE itself ships a set of basic templates for most common operating systems, and you can download them using the pveam (short for Proxmox VE Appliance Manager) command line utility. You can also download TurnKey Linux containers using that tool (or the graphical user interface). Our image repositories contain a list of available images, and there is a cron job run each day to download that list.

LXC. Linux Containers (LXC) provide a Free Software virtualization system for computers running GNU/Linux.

This is accomplished through kernel level isolation. It allows one to run multiple virtual units simultaneously. Those units, similar to chroots, are sufficiently isolated to guarantee the required security, but utilize available resources efficiently, as they run on the same kernel. For all related information visit : Full support for LXC (including userspace tools) is available since the Debian 6.0 "Squeeze" release.

Current issues in Debian 7 "Wheezy": LXC may not provide sufficient isolation at this time, allowing guest systems to compromise the host system under certain conditions. Apparently this is progressing in 3.12/lxc-beta2. lxc-halt times out (see Start and stop containers below). Proxmox and OpenVZ - Linux Server Wiki. To begin, partition your disk.

Create a 15 GB / partition, a swap partition, and finally an LVM2 partition with the remaining space. OpenVZ Proxmox Virtualization. After one year of operation with XEN, I chose to move Fridu from XEN paravirtualization to the OpenVZ container model.

Here are some explanations of why I made this change, and a description of my new architecture. Disclaimer: Anything I wrote here was done outside of my professional work context, and none of my current/past employers/customers have participated or even been consulted for this work. Fridu is 100% part of my free time, and everything including hosting is funded from our pocket money and used to support non-commercial friend organisations. While I think I have the technical background to design a smart architecture (cf: my profile).

Demonstration/Video: This demonstration is a live screencast done with xvidcap on Linux. It shows how to create a new virtual machine through the Proxmox OpenVZ web graphic interface, and then shows how to expose the newly created zone to the outside world with three different mechanisms: VPN, port forwarding and reverse proxy. Xen is rock solid, but ... Resource shortage. Sometimes you see strange failures from some programs inside your container.

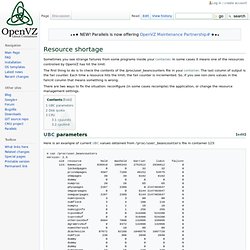

In some cases it means one of the resources controlled by OpenVZ has hit the limit. The first thing to do is to check the contents of the /proc/user_beancounters file in your container. The last column of output is the fail counter. Each time a resource hits the limit, the fail counter is incremented. So, if you see non-zero values in the failcnt column that means something is wrong. There are two ways to fix the situation: reconfigure (in some cases recompile) the application, or change the resource management settings.
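The failcnt check described above can be scripted. The following is a minimal Python sketch, assuming the usual /proc/user_beancounters layout (a version line, a header row, then one row per resource with failcnt as the last column). The function name parse_failcnt and the sample values are illustrative only and do not come from any OpenVZ tool.

```python
# Illustrative sample in the usual user_beancounters layout; the numbers are made up.
SAMPLE = """\
Version: 2.5
       uid  resource           held    maxheld    barrier      limit    failcnt
      101:  kmemsize        2619797    2897344   14372700   14790164          0
            lockedpages           0          0        256        256          0
            numproc              24         40         40         40          3
"""


def parse_failcnt(text):
    """Return {resource: failcnt} for every row whose fail counter is non-zero."""
    failures = {}
    for line in text.splitlines():
        fields = line.split()
        # The first row of a container starts with "<uid>:"; drop that prefix.
        if fields and fields[0].endswith(":"):
            fields = fields[1:]
        # A data row has six fields: resource held maxheld barrier limit failcnt.
        if len(fields) != 6:
            continue  # version line, header row, or blank line
        try:
            failcnt = int(fields[-1])
        except ValueError:
            continue  # not a numeric row
        if failcnt > 0:
            failures[fields[0]] = failcnt
    return failures


if __name__ == "__main__":
    for resource, count in parse_failcnt(SAMPLE).items():
        print(f"{resource}: {count} failed allocation(s)")
```

Inside a container, the same function could be fed the real file contents, for example with open("/proc/user_beancounters").read(), which typically requires root. The sketch assumes a single container's view of the file; on a host listing several containers, resource names would repeat and the result would need to be keyed by uid as well.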

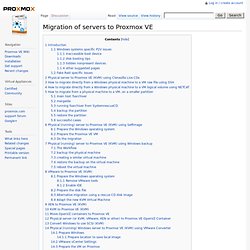

Migration of servers to Proxmox VE. You can migrate existing servers to Proxmox VE.

Moving Linux servers is always quite easy, so you will not find many troubleshooting hints here. Windows systems specific P2V issues: inaccessible boot device. Booting a virtual clone (IDE) of a physical Windows system partition may fail with a BSOD referring to the problem STOP: 0x0000007B (0xF741B84C,0xC0000034,0x00000000,0x00000000) INACCESSIBLE_BOOT_DEVICE. This means that the source physical Windows machine had no support for the IDE controller, or at least not for the one virtually replaced by kvm (see the Microsoft KB article for details). As Microsoft suggests, create a mergeide.reg file (File:Mergeide.zip) on the physical machine and merge it into the registry before the P2V migration. Self-hosting: configuring a Proxmox 2 cluster without multicast. Or how to interconnect, over the Internet and in unicast, several Proxmox nodes installed on separate networks.

Update, 30 March 2012: the stable version of Proxmox VE 2 is available and this post is up to date. Charlatan! The Proxmox 2 wiki is categorical: no multicast, no cluster. Yes, but no. (For a start...) Not only does it work but, pleasingly, the solution is rather elegant: just run the Proxmox nodes on a virtual network (one that supports multicast) and interconnect the whole thing with OpenVPN (in unicast). Now, in principle, if you are normally constituted, your eyes are shining and your tongue is hanging out, not to mention the trickle of drool running down your beard, still stained by last night's pizza.

But hold on, comrades, let us first render unto Caesar what is Caesar's, because in fact (unless I am mistaken) it was "ned Productions Ltd" who drew first, with a very nice tutorial (in English) on the subject: Right, done?