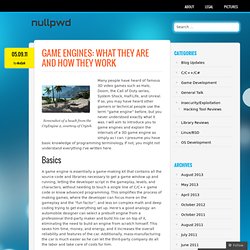

Blender 3D and Game Engine Tutorials. Blender Game Tutorial (2.6): Building a 3D Game - Part 1. Blender Game Tutorial (2.6): Building a 3D Game - Part 2. Blender Game Engine Python. Tutorials for Blender 3D and the Blender 3D Game Engine. Game Engine: Simple Character. Environment Animation in Blender 2.5 : CG Masters. Vampire masquerade redemption. Krum 1: Photo Gallery. Blender Game Engine Logic Bricks. Gallery for computer games using the Blender game engine. Blender 3D and Blender Game Engine Tutorials. How do 3d game engines render 3d environments to 2d screen. Game engines: What they are and how they work. Screenshot of a beach from the CryEngine 2, courtesy of Crytek.

Many people have heard of famous 3D video games such as Halo, Doom, the Call of Duty series, System Shock, Half-Life, and Unreal. If you are one of them, you may have heard other gamers or technical people use the term “game engine” without ever understanding exactly what it means. I will introduce you to game engines and explain the internals of a 3D game engine as simply as I can. I presume you have basic knowledge of programming terminology; if not, you might not understand everything I’ve written here. A game engine is essentially a game-making kit that contains all the source code and libraries necessary to get a game window up and running, letting the developer script in the gameplay, levels, and characters without needing to touch a single line of C/C++ game code or know advanced programming. There are plenty of companies that license game engines for profit, and chances are you’ve heard of them.

Py/Scripts/Import-Export/Autodesk FBX. From BlenderWiki. Autodesk FBX Exporter. Introduction: Export selected objects to Autodesk's .FBX file format.

This format is mainly used for interchanging character animations between applications and is supported by applications such as Cinema4D, Maya, 3dstudio MAX, and Wings3D, and by engines such as Unity3D and UnrealUDK. The exporter can bake mesh modifiers and animation into the FBX so the final result looks the same as it does in Blender. Usage Instructions: launch the exporter from the File → Export menu, set the options in the user interface (the default options should be okay in most situations), press the "Export" button, and select the filename to export to.

FAQ: Getting skinned animated models from Blender to Unity3D. Multiple animations in the same FBX file.

Believe it or not, Unity 3D will be the next hype in the indie game industry, and once you have discovered this tool and exported your 3D scenes to your iPhone, Android or even Flash (thanks to the new Molehill support) you will understand why.

But that’s not the point of our discussion. There is a topic that comes up very often in forums concerning the export/import of textures in Unity 3D: how do you export 3D objects or geometry made with Blender (up to 2.5) to Unity with textures? Yes, with textures… The problem is that the workflow is not very clear and textures are often missing, which is pretty annoying because once the model is imported in Unity 3D you need to assign them manually in the Material options… It’s a real pain, but fortunately there is a nice solution… Let’s get started!

Low Poly Game Asset Creation - Fire Hydrant in Blender and Unity 3D. Create Low Poly, Game Ready Assets in Blender and Unity 3D!

Through this Blender and Unity tutorial you will learn how to create low poly assets for games. Over the course of this tutorial you will learn the high poly and low poly modeling techniques needed to create the fire hydrant subject matter. Exporting Characters from Blender to Unity. DragonWars, an iPad game made with Blender and Unity. DragonWars from Digital Sorcery was released for Apple iPad (iTouch version coming shortly). The game was created using the Unity game engine, and all of the animation and some of the modeling were done in Blender by Brian Williamson.

He also has made several tutorials for Blender and Unity available on his YouTube account. I asked him some questions about this project. How large is your team? As for my development team. It was just me. Smooth Blender -> Unity workflow. Unity and Blender - Realtime level Prototyping. Theory - Efficient planning and modular workflow. In this theory lesson we will be taking a look at some tips for planning and building modular game environments.

These theory lessons are not application specific, and although I delve into some practical examples at points throughout the video, the focus is on theory and fundamentals. Below you will find the notes from this theory lesson for quick reference after watching the video. Reference Images used: Bots – Unity. What are Modular Environments and Why do we want them? The first thing we really want to know is what a modular environment actually is: what does it mean to be modular? Blender to Unity Workflow. FBX Import. Today I set about researching better ways to integrate Blender and Unity.

So far I’ve been dragging Blender files directly into Unity’s project palette. This method, however, is seriously flawed. Every time I want to change the scene in Blender, I have to manually replace all the textures for all the objects. Not only that, I must re-add all the colliders, scripts, and animations as well. Fortunately, I have found a better way.

Dev:Shading/Tangent Space Normal Maps. From BlenderWiki Implementation Dependent A common misunderstanding about tangent space normal maps is that this representation is somehow asset independent.

However, normals sampled/captured from a high resolution surface and then transformed into tangent space are more like an encoding. Thus, to recover the original captured field of normals, the transformation used to decode would have to be the exact inverse of the one used to encode. This presents a problem, since there is no implementation standard for tangent space generation.
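The encode/decode relationship can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the `basis` helper, the 10 degree rotation, and the sample normal are hypothetical and do not come from Blender or any particular baker. The "baker" projects a captured normal onto its tangent basis, and the "shader" rebuilds it, once with the same basis and once with a slightly different one.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def basis(angle_degrees):
    """Hypothetical orthonormal tangent basis: surface normal is +Z,
    and the tangent is rotated by the given angle about that normal."""
    a = math.radians(angle_degrees)
    normal = (0.0, 0.0, 1.0)
    tangent = (math.cos(a), math.sin(a), 0.0)
    bitangent = cross(normal, tangent)
    return tangent, bitangent, normal

def encode(captured, tangent, bitangent, normal):
    """Baker side: express the captured normal in tangent space."""
    return (dot(captured, tangent), dot(captured, bitangent), dot(captured, normal))

def decode(stored, tangent, bitangent, normal):
    """Shader side: rebuild the normal from its tangent-space components."""
    return normalize(tuple(
        stored[0] * tangent[i] + stored[1] * bitangent[i] + stored[2] * normal[i]
        for i in range(3)))

def angle_between(a, b):
    return math.degrees(math.acos(max(-1.0, min(1.0, dot(a, b)))))

captured = normalize((0.3, 0.2, 0.9))   # normal sampled from the high-res surface
baker_basis = basis(0.0)                # tangent space used while baking
shader_basis = basis(10.0)              # a different tangent space used while rendering

stored = encode(captured, *baker_basis)
error_matched = angle_between(captured, decode(stored, *baker_basis))
error_mismatched = angle_between(captured, decode(stored, *shader_basis))

print(f"matched basis error:    {error_matched:.4f} degrees")
print(f"mismatched basis error: {error_mismatched:.4f} degrees")
```

With the matching basis the decoded normal is the captured one up to floating-point error; with the mismatched basis it is off by a few degrees, and that kind of discrepancy is what appears on screen as a shading seam.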

The math error which occurs from this mismatch between the normal map baker and the pixel shader used for rendering results in shading seams. Normal maps (and importing them correctly) When to triangulate [Archive] Hi there!!

![When to triangulate [Archive]](http://cdn.pearltrees.com/s/pic/th/triangulate-archive-polycount-73821599)

Well, after a while I found out that some artists and tech artists sometimes do things out of habit without really questioning them. Especially with stuff like triangulation - it's one of these details that can easily create shading errors, but most people seem not to notice because it's 'good enough'. I personally mostly build my in-game meshes based on a loop system for one simple reason: clean loops make selection very easy (select one, and grow/shrink from there), hence it makes both UV and skin weighting much easier and faster. However, when it comes to baking, things can get tricky, as some apps don't even triangulate the same way before the bake (hidden edges oriented one way) and after it (shown edges oriented the opposite way), so to avoid all that I tend to triangulate before the bake and keep the asset that way from that point on.

But I do keep the mostly quad version in a safe place, if I ever need to go back to it. NormalMap. What is a Normal Map?

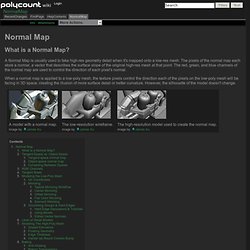

A Normal Map is usually used to fake high-res geometry detail when it's mapped onto a low-res mesh. The pixels of the normal map each store a normal, a vector that describes the surface slope of the original high-res mesh at that point. The red, green, and blue channels of the normal map are used to control the direction of each pixel's normal. When a normal map is applied to a low-poly mesh, the texture pixels control the direction each of the pixels on the low-poly mesh will be facing in 3D space, creating the illusion of more surface detail or better curvature. However, the silhouette of the model doesn't change. How important is triangulation?
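As a rough sketch of how the colour channels become a direction, the snippet below decodes one 8-bit RGB texel into a unit vector. It assumes the common convention that each channel's 0..255 range maps linearly to -1..1; that mapping and the function name `decode_normal` are assumptions for illustration, and individual tools may flip or swap channels.

```python
import math

def decode_normal(r, g, b):
    """Map 8-bit RGB channels (0..255) to a unit vector (assumed -1..1 convention)."""
    x, y, z = (c / 255.0 * 2.0 - 1.0 for c in (r, g, b))
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)

# The typical light-blue texel points almost straight out of the surface.
flat = decode_normal(128, 128, 255)

# A redder texel leans the normal towards +X.
tilted = decode_normal(200, 128, 220)

print(flat)
print(tilted)
```

Because only the per-pixel direction changes, lighting responds as if the detail were there while the mesh outline stays exactly the same.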

Blender Basics: optimising models for export and use in games. Previously we learned how Blender's interface is divided into working 'zones', how to navigate and move around the interface, and then how to make a model and apply UVW maps as we progressed through each section of the tutorial series to make a simple chair. For the next and final section, we'll be looking into one of the most essential, but least discussed, 'skills' of making 3D content - how to optimise it for use in a game or interactive environment (3D chat, game, virtual world and so on).

If you've jumped ahead to this final section of the Blender Basics tutorial then it's assumed you know how to use Blender and where all the buttons and shortcuts are. If you don't, it's recommended that you read through the entire tutorial to get to grips with Blender before tackling this part of the process. The Golden rule of optimising: "Less is more". Ben Mathis - 3d videogame art. Manual/Render/Bake.

From BlenderWiki. Baking, in general, is the act of pre-computing something in order to speed up some other process later down the line. Rendering from scratch takes a lot of time depending on the options you choose. Therefore, Blender allows you to "bake" some parts of the render ahead of time, for select objects. Then, when you press Render, the entire scene is rendered much faster, since the colors of those objects do not have to be recomputed. Mode: All Modes except Sculpt. Panel: Scene (F10) Render Context → Bake panel. Hotkey: Ctrl+Alt+B. Menu: Render → Bake Render Meshes. Description: The Bake tab in the Render buttons panel. Render baking creates 2D bitmap images of a mesh object's rendered surface.

Use Render Bake in intensive light/shadow solutions, such as AO or soft shadows from area lights. Use Full Render or Textures to create an image texture; baked procedural textures can be used as a starting point for further texture painting.

Advanced renderbump and normal map baking in Blender 3D from high poly models. Normal maps are essentially a clever way of 'faking', on low poly, low detailed models, the appearance of high resolution, highly detailed objects. Although the objects themselves are three dimensional (3D), the actual part that 'fakes' detail on the low resolution mesh is a two dimensional (2D) texture asset called a 'normal map'. What are normal maps and why use them? The process of producing these normal maps is usually referred to as 'baking' (or 'render to image'), whereby an application - in this instance Blender 3D - interprets the three dimensional geometrical structure of high poly objects as RGB ("Red", "Green" & "Blue") values that can then be 'written' as image data, using the UVW map of a low resolution 'game' model as a 'mask' of sorts, telling the bake process where that colour data needs to be drawn.
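The 'written as image data' step can be pictured with a very small sketch. It is not Blender's bake code: the function name `encode_normal` and the linear -1..1 to 0..255 mapping are assumptions chosen for illustration, and a real baker also handles ray casting, tangent-space conversion, and UV rasterisation.

```python
def encode_normal(normal):
    """Quantise a unit normal (components in -1..1) into 8-bit RGB values
    using an assumed linear mapping."""
    return tuple(int(round((c * 0.5 + 0.5) * 255)) for c in normal)

# A normal pointing straight out of the surface becomes the familiar light blue.
straight_up = encode_normal((0.0, 0.0, 1.0))

# A normal leaning towards +X gains red and loses some blue.
leaning = encode_normal((0.6, 0.0, 0.8))

print(straight_up, leaning)
```

Each texel covered by the low resolution model's UVW map would receive one such colour, which is why the UV layout decides where the colour data ends up in the image.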

3DTutorials/Modeling High-Low Poly Models for Next Gen Games - Polycount Wiki. Maya 3D Modeling Tutorials for Level Design and Game Environments.