Force sensor in simulated skin and neural model mimic tactile SAI afferent spiking response to ramp and hold stimuli. The next generation of prosthetic limbs will restore sensory feedback to the nervous system by mimicking how skin mechanoreceptors, innervated by afferents, produce trains of action potentials in response to compressive stimuli.

Prior work has addressed building sensors within skin substitutes for robotics, modeling skin mechanics and neural dynamics of mechanotransduction, and predicting response timing of action potentials for vibration. The effort here is unique because it accounts for skin elasticity by measuring force within simulated skin, utilizes few free model parameters for parsimony, and separates parameter fitting and model validation.

Additionally, the ramp-and-hold, sustained stimuli used in this work capture the essential features of the everyday task of contacting and holding an object.

Brain wave-reading robot might help stroke patients. From left, Gerard Francisco, José Luis Contreras-Vidal and Marcia O’Malley work with a University of Houston (UH) graduate student testing MAHI-EXO II, a robotic rehabilitation device developed at Rice and being used at TIRR Memorial Hermann to help spinal-cord-injury patients recover.

In a new project, a similar device will be matched with a noninvasive neural interface under development at UH to help rehabilitate stroke survivors. (Credit: Bruce French/TIRR Memorial Hermann)

New research by Rice University, the University of Houston (UH) and TIRR Memorial Hermann aims to help victims recover from stroke to the fullest extent possible by developing and validating a noninvasive brain-machine interface (BMI) to a robotic orthotic device that is expected to innovate upper-limb rehabilitation. The new neurotechnology will interpret brain waves that let a stroke patient willingly operate an exoskeleton that wraps around the arm from the fingertips to the elbow.

Can Billionaires Achieve Immortality by 2045? What's the Latest Development? Russian entrepreneur Dmitry Itskov is courting the world's richest individuals to help him in conquering death.

Paralysed woman drinks coffee with thought-guided robot arm. Voicegrams transform brain activity into words.

Electronic Tattoo-Like Devices Monitor Brain, Heart and Muscles. We might one day be able to monitor our bodies' internal functions — and prevent things like epileptic seizures before they happen — using a flexible circuit attached to the surface of skin.

The National Science Foundation announced Monday that researchers are working on a prototype tattoo-like device that can detect heart, muscle and brain activity. Tiny curly wires in a flexible membrane make up these devices and work better than conventional hard, brittle circuits, because body tissue itself is soft and pliable. "We're trying to bridge that gap, from silicon, wafer-based electronics to biological, 'tissue-like' electronics, to really blur the distinction between electronics and the body," said materials scientist John Rogers from the University of Illinois Urbana-Champaign.

"As the skin moves and deforms, the circuit can follow those deformations in a completely noninvasive way."

Artificial Super-Skin Could Transform Phones, Robots and Artificial Limbs. Touch sensitivity on gadgets and robots is nothing new.

A few strategically placed sensors under a flexible, synthetic skin and you have pressure sensitivity. Add a capacitive, transparent screen to a device and you have touch sensitivity. However, Stanford University’s new “super skin” is something special: a thin, highly flexible, super-stretchable, nearly transparent skin that can respond to touch and pressure, even when it’s being wrung out like a sponge. The brainchild of Stanford University Associate Professor of chemical engineering Zhenan Bao, this “super skin” employs a transparent film of spray-on, single-walled carbon nanotubes that sit in a thin film of flexible silicone, which is then sandwiched between more silicone.

Wearable robot puts paralysed legs through their paces. This article was taken from the February 2012 issue of Wired magazine.

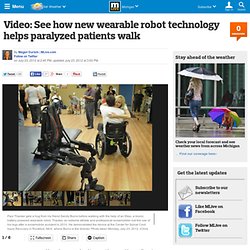

"This is the true integration of man and machine," says Eythor Bender, CEO of Ekso Bionics, a Californian research lab that has developed an intelligent "wearable robot". Bender and his team based the Ekso on a decade of bionics research by the US military. Its motorised leg braces let soldiers carry 90kg loads over long distances by anticipating the wearer's movement and transferring weight to the exoskeleton frame. The same principles allow paraplegics to walk with motorised legs, by responding to gestures made above the waist. Its adjustable titanium frame encases the legs, with straps around the waist, shoulders and thighs, and a computer with two batteries sits as a backpack, powering four electromechanical motors that propel the legs.

Connecting to the brain: Thinking about it. Virtual reality posts on CNET. CES: A laptop that follows your eyes - 1/13. Biggest Scientific Breakthroughs of 2011.

Video: See how new wearable robot technology helps paralyzed patients walk. ROCKFORD, MI -- An athlete who was paralyzed in an accident was able to walk again Monday morning during a demonstration of Ekso, a wearable exoskeleton robot, at DMC Rehabilitation Institute of Michigan's Center for Spinal Cord Injury Recovery.

The Ekso technology, named for its exoskeleton-like properties, was developed by California-based Ekso Bionics and aims to help those with lower extremity paralysis or weakness to stand and walk. The wearable robot is a battery-powered bionic device that uses motors to help users walk, said Beverly Millson with Missing Sock Public Relations. The robotic suit weighs about 45 pounds and can be strapped on within three minutes. The patient does not bear the weight of the device as the load is transferred to the ground. The Rehabilitation Institute of Michigan's facility in Detroit purchased one of the robots and funds are being raised for the Rockford location to do the same, said program director Sandy Burns.

Dream of making artificial body parts becoming a reality. It sounds like a sci-fi movie – doctors growing body parts to cure our ills.

But thanks to incredible breakthroughs, bionic repairs for humans are fast becoming a reality. Experts yesterday revealed they are perfecting “off the shelf” blood vessels, which could revolutionise treatment of heart attacks and strokes. If the Cambridge University blood vessel team is successful, patients could be spared major operations. The test tube vessels may also treat kidney dialysis patients and repair injuries.

And because the patient’s own skin cells are used, there is less chance of rejection. Professor Jeremy Pearson, of the British Heart Foundation, said: “This is very advanced.”

Here are other ways science is giving nature a helping hand... Experts are working on a cure for blindness - and have taken huge strides towards their goal.