An Introduction to Text Mining using Twitter Streaming API and Python // Adil Moujahid // Data Analytics and more. Text mining is the application of natural language processing techniques and analytical methods to text data in order to derive relevant information.

Text mining has received a lot of attention in recent years, due to an exponential increase in digital text data from web pages, Google projects such as Google Books and Google Ngram, and social media services such as Twitter. Twitter data constitutes a rich source that can be used for capturing information about any topic imaginable. This data can be used in different use cases such as finding trends related to a specific keyword, measuring brand sentiment, and gathering feedback about new products and services.
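As a rough illustration of the first use case (finding trends related to a keyword), the sketch below counts matching tweets per day. The `keyword_counts_by_day` helper and the sample tweets are hypothetical, invented here for illustration; real input would come from the Twitter API.

```python
from collections import Counter


def keyword_counts_by_day(tweets, keyword):
    """Count, per day, the tweets whose text mentions `keyword`.

    `tweets` is an iterable of (day, text) pairs. Matching is a
    case-insensitive substring test, so "python" also matches "pythonic".
    """
    keyword = keyword.lower()
    counts = Counter()
    for day, text in tweets:
        if keyword in text.lower():
            counts[day] += 1
    return dict(counts)


# Hypothetical sample data (not real tweets).
sample = [
    ("2014-07-01", "Learning Python for data analysis"),
    ("2014-07-01", "python tips and tricks"),
    ("2014-07-02", "JavaScript frameworks compared"),
    ("2014-07-02", "Why I like Python"),
]

daily = keyword_counts_by_day(sample, "python")
```

Plotting `daily` over a longer period would give a simple trend line for the keyword. A substring test is the crudest possible matcher and is used here only to keep the sketch short.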

Text Mining - RDataMining.com: R and Data Mining. This page shows an example of text mining of Twitter data with the R packages twitteR, tm and wordcloud.

Package twitteR provides access to Twitter data, tm provides functions for text mining, and wordcloud visualizes the result with a word cloud. If you have no access to Twitter, the tweet data can be downloaded as the file "rdmTweets.RData" at the Data page, and then you can skip the first step below.

Retrieving Text from Twitter

The Twitter API has required authentication since March 2013. Please follow the instructions in "Section 3 - Authentication with OAuth" in the twitteR vignettes on CRAN or this link to complete authentication before running the code below.

Transforming Text

The tweets are first converted to a data frame and then to a corpus.

    > df <- do.call("rbind", lapply(rdmTweets, as.data.frame))
    > dim(df)
    [1] 79 10
    > library(tm)
    > # build a corpus, which is a collection of text documents
    > # VectorSource specifies that the source is character vectors.
    > myCorpus <- Corpus(VectorSource(df$text))
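For readers working in Python, the same two steps (flatten the tweets into rows, then collect the text column as a corpus) might be sketched as follows. This is only an analogue using the standard library, not the twitteR or tm API; the tweet records and field names are hypothetical stand-ins for `rdmTweets`.

```python
import re
from collections import Counter

# Hypothetical tweet records standing in for rdmTweets (not real data).
tweets = [
    {"id": 1, "screenName": "example_user",
     "text": "Text mining with R and Twitter"},
    {"id": 2, "screenName": "example_user",
     "text": "Mining tweets: a text mining example"},
]

# Step 1: one row per tweet, the role `df` plays in the R code.
rows = [(t["id"], t["screenName"], t["text"]) for t in tweets]

# Step 2: the corpus is simply the collection of text documents.
corpus = [text for _, _, text in rows]

# A basic term count over the corpus: lowercase, ASCII letters only.
term_counts = Counter(
    word
    for doc in corpus
    for word in re.findall(r"[a-z]+", doc.lower())
)
```

`term_counts.most_common(10)` would then list the most frequent terms, which is the kind of input a word cloud is built from.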

1 getting data - Mining Twitter with R. Mining the Social Web, 2E. This chapter kicks off our journey of mining the social web with Twitter, a rich source of social data that is a great starting point for social web mining because of its inherent openness for public consumption, clean and well-documented API, rich developer tooling, and broad appeal to users from every walk of life.

Twitter data is particularly interesting because tweets happen at the "speed of thought" and are available for consumption as they happen in near real time, represent the broadest cross-section of society at an international level, and are so inherently multifaceted. Tweets and Twitter's "following" mechanism link people in a variety of ways, ranging from short (but often meaningful) conversational dialogues to interest graphs that connect people and the things that they care about.

Since this is the first chapter, we'll take our time acclimating to our journey in social web mining.

Data, Data, Data: Thousands of Public Data Sources. We love data, big and small, and we are always on the lookout for interesting datasets.

Over the last two years, the BigML team has compiled a long list of sources of data that anyone can use. It’s a great list for browsing, importing into our platform, creating new models and just exploring what can be done with different sets of data. In this post, we are sharing this list with you. Why? Well, searching for great datasets can be a time-consuming task.

Categories of data sources

We grouped the links into some categories that bit.ly calls ‘Bundles’ to help you find what you are looking for and bundled the Bundles into a single Data Sources Bundle.

Machine Learning Datasets

Although many datasets can be used for machine learning tasks, the sources in this Bundle are specifically pre-processed for machine learning.

Machine Learning Challenges

Our next bundle of links contains links to Machine Learning Challenges.

Kaggle Beginner Competition: Predicting Titanic Survival using Machine Learning. Complete the analysis of who was likely to survive, using the tools of machine learning.

Such competitions are a great starting place for people who don't have a lot of experience in data science and machine learning. The wreck of the RMS Titanic is one of the most infamous shipwrecks in history. On April 15, 1912, during her maiden voyage, the Titanic sank after colliding with an iceberg, killing 1502 out of 2224 passengers and crew. This sensational tragedy shocked the international community and led to better safety regulations for ships.

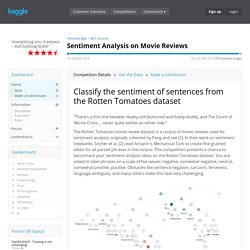

One of the reasons that the shipwreck led to such loss of life was that there were not enough lifeboats for the passengers and crew. In this contest, we ask you to complete the analysis of what sorts of people were likely to survive.

Sentiment Analysis on Movie Reviews. "There's a thin line between likably old-fashioned and fuddy-duddy, and The Count of Monte Cristo ... never quite settles on either side."

The Rotten Tomatoes movie review dataset is a corpus of movie reviews used for sentiment analysis, originally collected by Pang and Lee [1]. In their work on sentiment treebanks, Socher et al. [2] used Amazon's Mechanical Turk to create fine-grained labels for all parsed phrases in the corpus. This competition presents a chance to benchmark your sentiment-analysis ideas on the Rotten Tomatoes dataset. You are asked to label phrases on a scale of five values: negative, somewhat negative, neutral, somewhat positive, positive.

Where to start with Data Mining and Data Science.

Gregory Piatetsky answer: