3D Stereoscopic Photography: Depth Map Automatic Generator 5 (DMAG5)

CFDG Gallery. Computer Vision Group @ KTH. Www-cvr.ai.uiuc.

Yasutaka Furukawa and Jean Ponce, Beckman Institute and Department of Computer Science, University of Illinois at Urbana-Champaign. The following are multiview stereo data sets captured in our lab: a set of images, camera parameters, and extracted apparent contours of a single rigid object.
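The data sets ship with intrinsic and extrinsic camera parameters. As a minimal sketch of what those parameters do, assuming the standard pinhole model and ignoring the lens-distortion coefficients that Bouguet's toolbox also estimates, a world point X maps to pixels via x ~ K(RX + t). The function names, the matrix layout, and the example values are illustrative assumptions, not the data sets' actual file format.

```python
# Minimal pinhole-camera projection sketch (lens distortion ignored).
# K holds the intrinsic parameters; R and t are the extrinsic rotation and
# translation that map world coordinates into camera coordinates.

Matrix3 = list[list[float]]
Vector3 = list[float]


def mat_vec(m: Matrix3, v: Vector3) -> Vector3:
    """Multiply a 3x3 matrix by a 3-vector."""
    return [sum(m[r][c] * v[c] for c in range(3)) for r in range(3)]


def project(point_world: Vector3, K: Matrix3, R: Matrix3, t: Vector3) -> tuple[float, float]:
    """Project a 3D world point to pixel coordinates (u, v)."""
    # Extrinsics: world -> camera coordinates, X_c = R X_w + t.
    x_cam = [a + b for a, b in zip(mat_vec(R, point_world), t)]
    if x_cam[2] <= 0:
        raise ValueError("point is behind the camera")
    # Intrinsics: camera coordinates -> homogeneous pixel coordinates.
    u_h, v_h, w = mat_vec(K, x_cam)
    # Perspective division turns homogeneous coordinates into pixels.
    return u_h / w, v_h / w
```

For example, with focal lengths of 1000 pixels, the principal point at (700, 650), R equal to the identity and t = (0, 0, 5), the world point (0.1, -0.2, 0) lands at pixel (720, 610).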

Each data set consists of 24 images. Image resolutions range from 1400x1300 to 2000x1800 pixels depending on the data set. For calibration, we used the "Camera Calibration Toolbox for Matlab" by Jean-Yves Bouguet to estimate both the intrinsic and the extrinsic camera parameters. Images are provided in JPEG format. Note: we also provide visual hull data sets. We captured the above data sets in our lab using 3 fixed cameras (Canon EOS 1D Mark II) and a motorized turntable.

ATC shopping center tracking dataset. As part of our project on enabling mobile social robots to work in public spaces (project homepage in Japanese, funded by JST/CREST), we set up a tracking environment in the "ATC" shopping center in Osaka, Japan.

The system consists of multiple 3D range sensors covering an area of about 900 m². More details about the system are given in the accompanying reference paper. The data was collected twice a week for one full year. Here we provide sample datasets of both the tracking result and the raw sensor measurements. Tracking result: this is a sample result of the pedestrian tracking from November 14, 2012, 9:40-20:20.

Scene Reconstruction from High Spatio-Angular Resolution Light Fields.

TinyGL: a Small, Free and Fast Subset of OpenGL*. News (Mar 17 2002): TinyGL 0.4 is out. Download: TinyGL-0.4.tar.gz.

3D Object Manipulation in a Single Photograph using Stock 3D Models. DEPTHY, the Lens Blur viewer. GameFace Labs Has First VR Headset with a 2.5K Display, And It's Mobile.

Why xkcd-style graphs are important. For most data scientists, a toolkit like scipy/matplotlib or R becomes so familiar that it is almost an extension of their own mind.

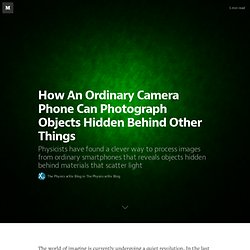

When fleshing out an idea, we will just open up ipython, write a few lines of code, and display the plot on the screen to get a feel for it. One very nice thing about such toolkits is that although the code may be short and simple, the plots are beautiful and precise. That precision can be a problem, however, when communicating with others (particularly non-technical others).

Treevis.net. Collective 'network mapping' platform and relationship database - Graph Commons.

How An Ordinary Camera Phone Can Photograph Objects Hidden Behind Other Things - The Physics arXiv Blog. The world of imaging is currently undergoing a quiet revolution.

In the last few years, physicists have worked out how to take high-resolution images of ordinary scenes using just a single pixel. Yep, that’s just one pixel. Indeed, the same single-pixel technique can produce 3D images and even 3D movies. If that sounds extraordinary, it’s just the start. This technique requires no lens. What it does need is lots of images, which must be processed on a powerful computer to reconstruct the original scene.

The Littlest CPU Rasterizer. Okay, so I’ve been declaring for a long time that I’m going to blog about some of the stuff I’ve developed for my personal project, but I never seem to get around to it.

Finally, I thought I’d just start off with a cute little trick: here’s how I’m doing “image-based” occlusion for my sky light. A little while ago, while I was thinking about ambient lighting, I realized I actually had a simple enough problem that I could render very small cubemaps, on the CPU, in vast numbers. Here’s the basic underlying idea: a 16-by-16 black-and-white image is 256 bits, or 32 bytes.
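The point of the 32-byte figure is that a whole 16-by-16 occlusion image fits in a handful of machine words, so drawing and counting can use bitwise operations instead of per-pixel loops. The sketch below is a hypothetical illustration of that idea, not the author's rasterizer: it stores each row as a 16-bit mask (in C or C++ this would be a `uint16_t`), fills axis-aligned rectangles as stand-ins for projected occluders with one OR per row, and estimates how much of the face is blocked by counting set bits. The names `fill_rect`, `coverage` and `to_bytes` are assumptions.

```python
# Hypothetical sketch: a 16x16 1-bit image stored as 16 row masks of 16 bits.
# 16 rows * 16 bits = 256 bits = 32 bytes.
SIZE = 16
FULL_ROW = (1 << SIZE) - 1


def empty_image() -> list[int]:
    """Return an all-clear (fully unoccluded) image."""
    return [0] * SIZE


def fill_rect(img: list[int], x0: int, y0: int, x1: int, y1: int) -> None:
    """Set pixels with x0 <= x < x1 and y0 <= y < y1, clipped to the image."""
    x0, x1 = max(0, x0), min(SIZE, x1)
    y0, y1 = max(0, y0), min(SIZE, y1)
    if x0 >= x1:
        return
    # One mask with bits x0..x1-1 set covers the whole horizontal span.
    span = ((1 << (x1 - x0)) - 1) << x0
    for y in range(y0, y1):
        img[y] |= span


def coverage(img: list[int]) -> float:
    """Fraction of the 256 pixels that are set, i.e. occluded."""
    return sum(bin(row).count("1") for row in img) / (SIZE * SIZE)


def to_bytes(img: list[int]) -> bytes:
    """Pack the image into exactly 32 bytes, 2 little-endian bytes per row."""
    return b"".join(row.to_bytes(2, "little") for row in img)
```

Overlapping occluders cost nothing extra here: OR-ing a bit that is already set leaves it set, so `coverage` never double-counts.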

Rendering large terrains. Today we’ll look at how to efficiently render a large terrain in 3D.

We’ll be using WebGL to do this, but the techniques can be applied pretty much anywhere. We’ll concentrate on the vertex shader, that is, how best to position the vertices of our terrain mesh so that it looks good up close as well as far away. To see how the end result looks, check out the live demo. The demo was built using THREE.js, and the code is on GitHub, if you’re interested in the details. An important concept when rendering terrain is the “level of detail” (LOD): distant parts of the terrain cover fewer pixels on screen, so they can be drawn with far fewer vertices than nearby parts.

SimpleCV. BioShock Infinite Lighting.

Structure Sensor: Capture the World in 3D by Occipital. The Structure Sensor gives mobile devices the ability to capture and understand the world in three dimensions.

With the Structure Sensor attached to your mobile device, you can walk around the world and instantly capture it in digital form. This means you can capture 3D maps of indoor spaces and have every measurement in your pocket. You can instantly capture 3D models of objects and people for import into CAD and for 3D printing. You can play mind-blowing augmented reality games where the real world is your game world.

Walt Disney Animation Studios. Gosu-opengl-tutorials/using_nehe_lessons/lesson05.rb at master · tjbladez/gosu-opengl-tutorials. Topdraw - An algorithmic image generation application. Universal Scene Description.
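The Structure Sensor is a depth camera: each frame reports a distance per pixel, and turning that into 3D geometry is, at its simplest, a back-projection through the pinhole model. A minimal sketch, assuming a depth map in metres with 0 meaning "no reading" and known intrinsics (focal lengths `fx`, `fy` and principal point `cx`, `cy`). It does not use Occipital's SDK, and `Intrinsics` and `depth_to_points` are hypothetical names. A complete scanner also has to align and fuse many such frames, which this sketch does not attempt.

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    fx: float  # focal length in pixels along x
    fy: float  # focal length in pixels along y
    cx: float  # principal point x (pixels)
    cy: float  # principal point y (pixels)


def depth_to_points(depth: list[list[float]], k: Intrinsics) -> list[tuple[float, float, float]]:
    """Back-project a row-major depth map into camera-space 3D points.

    Pixel (u, v) with depth z maps to ((u - cx) * z / fx, (v - cy) * z / fy, z).
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:  # skip pixels without a valid depth reading
                continue
            x = (u - k.cx) * z / k.fx
            y = (v - k.cy) * z / k.fy
            points.append((x, y, z))
    return points
```

The resulting point list is the raw material for the 3D maps and models described above; meshing and texturing are separate steps.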