Order, rhythm and pattern. This is not about how to count ants but how ants count.

The post follows research by Zhanna Reznikova and Boris Ryabko that investigates the numerical capacities of ants using ideas from information theory such as Shannon entropy and Kolmogorov complexity (Reznikova & Ryabko 2012).

Ants

Some species of red wood ant, which live in colonies of approximately 800–2000 individuals, have a highly specialised social structure that includes stable foraging teams of 5 to 9 ants. Each team is led by a scout ant whose function is to find food sources; the scout then communicates their location to the other members of its foraging team.

Dr. Susan Blackmore. Page.

Kristjankorjus/Replicating-DeepMind · GitHub.
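The ant study summarised above leans on two information-theoretic measures, Shannon entropy and Kolmogorov complexity, which capture different things. A minimal sketch of the contrast, assuming two hypothetical sequences of left/right turns (not data from Reznikova and Ryabko) and using zlib compression as a rough stand-in for Kolmogorov complexity (an assumption of this sketch, not the authors' method): both sequences contain the same symbols in the same proportions, but only one has a rhythm.

```python
import math
import random
import zlib
from collections import Counter


def shannon_entropy(seq: str) -> float:
    """Empirical Shannon entropy in bits per symbol, computed from symbol
    frequencies alone (the order of the symbols is ignored)."""
    counts = Counter(seq)
    n = len(seq)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def compressed_size(seq: str) -> int:
    """Size in bytes of the zlib-compressed sequence. Kolmogorov complexity is
    uncomputable; a general-purpose compressor only gives a rough upper bound."""
    return len(zlib.compress(seq.encode("ascii"), 9))


# Hypothetical turn sequences (L = left, R = right), not data from the study.
regular = "LR" * 256                 # strict rhythm: L, R, L, R, ...
rng = random.Random(0)
shuffled = list(regular)
rng.shuffle(shuffled)                # same symbols, same counts, pattern destroyed
irregular = "".join(shuffled)

for name, seq in [("regular", regular), ("irregular", irregular)]:
    print(name, round(shannon_entropy(seq), 3), compressed_size(seq))
```

Entropy computed from frequencies sees no difference between a rhythm and a shuffle (both come out at 1 bit per symbol), while the compressor exploits the repetition in the regular sequence. That gap is the intuition behind using description length to talk about order and pattern.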

How consciousness works – Michael Graziano

Scientific talks can get a little dry, so I try to mix it up.

I take out my giant hairy orangutan puppet, do some ventriloquism and quickly become entangled in an argument. I’ll be explaining my theory about how the brain, a biological machine, generates consciousness. Kevin, the orangutan, starts heckling me: ‘Yeah, well, I don’t have a brain. But I’m still conscious.’ Kevin is the perfect introduction. Many thinkers have approached consciousness from a first-person vantage point, the kind of philosophical perspective according to which other people’s minds seem essentially unknowable. Lately, the problem of consciousness has begun to catch on in neuroscience. I believe that the easy and the hard problems have gotten switched around. In a period of rapid evolutionary expansion called the Cambrian Explosion, animal nervous systems acquired the ability to boost the most urgent incoming signals.

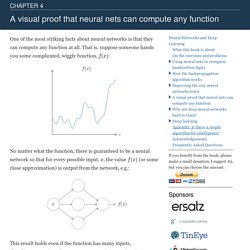

Attention requires control. I call this the ‘attention schema theory’.

Neural networks and deep learning

One of the most striking facts about neural networks is that they can compute any function at all.

That is, suppose someone hands you some complicated, wiggly function, f(x). No matter what the function, there is guaranteed to be a neural network such that for every possible input x, the value f(x) (or some close approximation) is output from the network.

SHRDLU resurrection. This page was created in 2002 and last updated on 2013 August 22.
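The universality claim from Neural networks and deep learning can be made concrete with a hand-wired network. A minimal sketch, loosely following the step-and-bump intuition; the target function, number of bumps and weight size are arbitrary choices for illustration. A sigmoid neuron with a very large input weight behaves almost like a step, the difference of two such steps is a bump, and a row of bumps scaled by f traces out the function.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function, written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def build_bump_network(f, n_bumps: int = 50, weight: float = 1000.0):
    """Hand-wire a one-hidden-layer sigmoid network that approximates f on [0, 1].

    Each bump uses two hidden neurons with a large input weight, so each
    neuron acts almost like a step at its edge; subtracting the two steps
    gives a bump of width 1/n_bumps, scaled by f at the bump's midpoint.
    """
    edges = [i / n_bumps for i in range(n_bumps + 1)]
    heights = [f((edges[i] + edges[i + 1]) / 2) for i in range(n_bumps)]

    def network(x: float) -> float:
        total = 0.0
        for i, h in enumerate(heights):
            rise = sigmoid(weight * (x - edges[i]))       # step at the left edge
            fall = sigmoid(weight * (x - edges[i + 1]))   # step at the right edge
            total += h * (rise - fall)                    # output weights +h and -h
        return total

    return network


def target(x: float) -> float:
    """Hypothetical 'wiggly' function to approximate."""
    return math.sin(3 * x)


net = build_bump_network(target, n_bumps=50)
xs = [0.05 + 0.9 * k / 200 for k in range(201)]   # interior points of [0, 1]
print(max(abs(net(x) - target(x)) for x in xs))
```

More hidden neurons (narrower bumps) and larger weights shrink the error. The check stays in the interior of [0, 1] because the outermost steps are only half switched on at the endpoints.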

Network of 75 Million Neurons of the Mouse Brain Mapped for the First Time

With improved visualization tools and souped-up computers to crunch the massive numbers involved in studying the 100 billion neurons in the human brain, many researchers, as well as the U.S. government, are trying to map the brain’s wiring, or “connectome.”

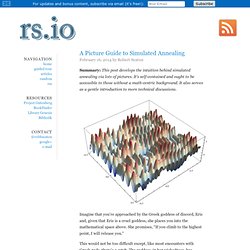

Some researchers have dived right into studying the massive human brain, but others see the mouse brain, with just 75 million neurons, as a more logical place to start.

A Picture Guide to Simulated Annealing

Summary: This post develops the intuition behind simulated annealing via lots of pictures.

It's self-contained and ought to be accessible to readers without a math-centric background. It also serves as a gentle introduction to more technical discussions. Imagine that you’re approached by Eris, the Greek goddess of discord. Being a cruel goddess, she places you into a mathematical space and promises, “If you climb to the highest point, I will release you.”

About Torch7

Torch7 is a scientific computing framework with wide support for machine learning algorithms.

It is easy to use and provides a very efficient implementation, thanks to an easy and fast scripting language, LuaJIT, and an underlying C implementation. Among other things, it provides:

- a powerful N-dimensional array
- lots of routines for indexing, slicing, transposing, ...
- an amazing interface to C, via LuaJIT
- linear algebra routines
- neural network and energy-based models
- numeric optimization routines
- ...

Clément Farabet

Follow me on Github and on the Torch portal.

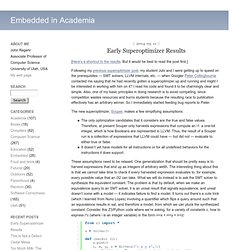

I host all my projects there.

Torch7 provides a Matlab-like environment for state-of-the-art machine learning algorithms, scripted in Lua (LuaJIT) on top of an underlying C/OpenMP/CUDA implementation.

Early Superoptimizer Results

Following my previous superoptimizer post, my student Jubi and I were getting up to speed on the prerequisites (SMT solvers, LLVM internals, etc.) when Googler Peter Collingbourne contacted me to say that he had recently gotten a superoptimizer up and running, and to ask whether I might be interested in working with him on it. I read his code and found it charmingly clear and simple.

The Trick That Makes Google's Self-Driving Cars Work – Alexis C. Madrigal

Google is engaging in unprecedented, massive, ongoing data collection to transform intractable problems into solvable chores.

A snapshot of how a Google car sees the world around it (image: Alexis Madrigal).

Stanford Bioengineers Develop 'Neurocore' Chips 9,000 Times Faster Than a PC

Deniz Yuret's Homepage: Machine learning in 10 pictures

I find myself coming back to the same few pictures when explaining basic machine learning concepts. Below is a list of the ones I find most illuminating.

1. Test and training error: why lower training error is not always a good thing (ESL Figure 2.11).

Steve's Machine Learning Blog: Top Ten Reasons To "Kaggle"

Do you aspire to do Machine Learning, Data Science, or Big Data Analytics?

DataTau
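Yuret's first picture (ESL Figure 2.11) makes the point that lower training error is not always a good thing. A minimal sketch of that effect, assuming synthetic data y = sin(x) + noise and using k-nearest-neighbour regression as the model (a choice made here, not taken from Yuret's post). The number of neighbours k acts as the complexity knob, with small k being the most flexible model. With k = 1 every training point predicts itself, so training error is zero, yet test error is worse than for a moderate k.

```python
import math
import random


def make_data(n: int, rng: random.Random, noise: float = 0.5):
    """Hypothetical 1-D regression data: y = sin(x) + Gaussian noise, x in [0, 2*pi]."""
    xs = [rng.uniform(0.0, 2 * math.pi) for _ in range(n)]
    ys = [math.sin(x) + rng.gauss(0.0, noise) for x in xs]
    return xs, ys


def knn_errors(train_x, train_y, query_x, query_y, ks):
    """Mean squared error of k-nearest-neighbour regression for each k in ks."""
    sq_err = {k: 0.0 for k in ks}
    for x, y in zip(query_x, query_y):
        # Sort training points by distance once, then reuse the order for every k.
        order = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))
        for k in ks:
            pred = sum(train_y[i] for i in order[:k]) / k
            sq_err[k] += (pred - y) ** 2
    return {k: e / len(query_x) for k, e in sq_err.items()}


rng = random.Random(42)
train_x, train_y = make_data(200, rng)
test_x, test_y = make_data(1000, rng)
ks = [1, 2, 5, 10, 20, 50, 100]      # small k = more flexible (more complex) model

train_err = knn_errors(train_x, train_y, train_x, train_y, ks)
test_err = knn_errors(train_x, train_y, test_x, test_y, ks)
for k in ks:
    print(f"k={k:3d}  train MSE={train_err[k]:.3f}  test MSE={test_err[k]:.3f}")
```

Reading the printed table from large k to small k mirrors the figure's horizontal axis: training error keeps falling as the model gets more flexible, while test error bottoms out and then rises again.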