pierre-michel Goupil

Conversations with Robots: Voice, Smart Agents & the Case for Structured Content. Painting. Azure. Blockchain. eBook. To read. Architecture. Project documentation. Conversation UI. A Hitchhiker’s Guide to giving a Mini-talk in Maths (or another technical subject). There are excellent in-depth sources about giving talks.

This small guide focuses on so-called mini-talks. No one likes to sit through a bad talk, but unfortunately everyone does it much too frequently. No one sets out to give a bad talk, but probably all of us have done so. (Bryna Kra, Giving a Talk.) A great talk requires a lot of experience, but it is a skill that can be learned. Mini-talks. Mini-talks are very short talks. Choose a topic you find interesting and exciting. Exciting your audience is easiest if you are excited yourself.

Focus on one single point and make it dead clear. API University. API - Open Food Facts EN. Introduction. Current status of the API. Getting help with the API. You can ask for help on using the API in the API channel on Slack.

Join us to discuss your use case and get help/code from other devs. How to test the API.

UX. Color In Design: Influence On Users' Actions. Every single day we’re surrounded by various colors from everywhere.

If you take a closer look at the things around you, they may surprise you with their number of colors and shades. People may not notice how colorful everyday things are, but colors have a significant impact on our behavior and emotions. Today our article is devoted to the science that studies this issue, called color psychology. Let’s define the meaning of the colors and review some tips on choosing suitable colors for a design. What is color psychology? It’s a branch of psychology that studies the influence of colors on human mood and behavior. The success of a product depends largely on the colors chosen for its design. Meaning of colors. To convey the right tone and message and to prompt users to take the expected action, designers need to understand what colors mean and what reactions they evoke.

Red. The color is usually associated with passionate, strong, or aggressive feelings. Toonie Alarm app tutorial. Orange. Data Mining Concepts, Models, Methods, and Algorithms CHM Download Free. A comprehensive introduction to the exploding field of data mining. We are surrounded by data, numerical and otherwise, which must be analyzed and processed to convert it into information that informs, instructs, answers, or otherwise aids understanding and decision-making.

Due to the ever-increasing complexity and size of today's data sets, a new term, data mining, was created to describe the indirect, automatic data analysis techniques that utilize more complex and sophisticated tools than those which analysts used in the past to do mere data analysis. Data Mining: Concepts, Models, Methods, and Algorithms discusses data mining principles and then describes representative state-of-the-art methods and algorithms originating from different disciplines such as statistics, machine learning, neural networks, fuzzy logic, and evolutionary computation.

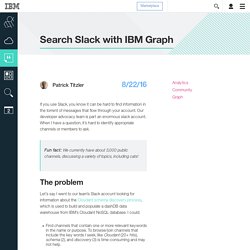

Search Slack with IBM Graph - Cloud Data Services. If you use Slack, you know it can be hard to find information in the torrent of messages that flow through your account.

Our developer advocacy team is part of an enormous Slack account. The best free software for cleaning your PC.

Tiny Weather - CodeProject. Introduction. Tiny Weather uses the services of Weather Underground and Freegeoip.net. The application only grabs the current weather conditions, but with Weather Underground you can get a lot more.

We get the weather data in JSON format, so we need to use NewtonSoft.json.dll to parse the data (link below). Getting your location can be as easy as typing it in manually, or we can geolocate it through the freegeoip.net service using your IP address; this data is also in JSON format.

#Review of the 3rd-generation Nest smart thermostat. When you think about improving your heating system, whether to save money, to control it remotely from a smartphone, or both, your research inevitably leads to the Nest smart thermostat.

In five years, several million have been sold worldwide, and even though competition keeps growing, the Nest thermostat remains far ahead. A brief history to begin. The first prototype of the Nest thermostat was built in December 2010, but it did not go on sale until October 2011. At the time it was a small revolution, because connected objects were in their infancy, and its self-learning feature made a strong impression. A year later, the second generation appeared.

Monitoring. NoSQL. RSS. Weather. Mobile. Machine Learning. Web development. AI. Training. Home automation. Auto. How to Design an Alexa Handsfree Messenger Skill. Learn how to create a Handsfree Messenger Skill in this tutorial by Madhur Bhargava, a specialist in various mobile/embedded technologies.

Overview of the Handsfree Messenger Skill. This article teaches you to build an Alexa skill, which you can call Handsfree Messenger. It lets the user send SMS messages hands-free, using only Alexa and an Echo, without any interaction with their phone. You’ll use a service called Twilio, which allows a developer to make and receive phone calls programmatically and to send and receive text messages through web service APIs, in addition to AWS, where you will host your Lambda. This is how the Handsfree Messenger skill will work: 1. 2. 3. 4. 5. 6.
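The individual steps are not reproduced in this excerpt, so the following is only a minimal sketch of the general pattern such a skill might follow, not the tutorial author's implementation. It shows a Lambda-style handler that reads an Alexa intent request, extracts two slots, and returns a spoken response. The intent name `SendMessageIntent` and the slot names `Recipient` and `Message` are hypothetical, and `send_sms` is a caller-supplied placeholder standing in for whatever Twilio call the real skill would make.

```python
from typing import Callable


def build_speech_response(text: str, end_session: bool = True) -> dict:
    """Wrap plain text in the JSON envelope an Alexa skill returns."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def handle_request(event: dict, send_sms: Callable[[str, str], None]) -> dict:
    """Route an Alexa request; send_sms(recipient, body) is a hypothetical hook."""
    request = event.get("request", {})
    if request.get("type") == "LaunchRequest":
        return build_speech_response(
            "Who should I text, and what should I say?", end_session=False
        )
    if request.get("type") != "IntentRequest":
        return build_speech_response("Goodbye.")

    intent = request.get("intent", {})
    # Hypothetical intent name, chosen only for this sketch.
    if intent.get("name") != "SendMessageIntent":
        return build_speech_response("Sorry, I can't help with that.")

    # Hypothetical slot names; a real skill defines these in its interaction model.
    slots = intent.get("slots", {})
    recipient = slots.get("Recipient", {}).get("value")
    message = slots.get("Message", {}).get("value")
    if not recipient or not message:
        return build_speech_response(
            "I need both a recipient and a message.", end_session=False
        )

    send_sms(recipient, message)
    return build_speech_response(f"Message sent to {recipient}.")
```

Passing the SMS sender in as a function keeps the handler testable without network access or credentials; in a deployed Lambda, the entry point would call `handle_request` with a function that performs the actual send.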