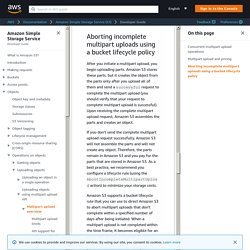

The Multipart upload API enables you to upload large objects in parts.
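Here is a minimal sketch of what uploading "in parts" involves on the client side. It assumes the published S3 limits: every part except the last must be at least 5 MiB, a part may be at most 5 GiB, and part numbers run from 1 to 10,000. It splits an object into numbered byte ranges and then mimics how the completed object is assembled in ascending part-number order. `plan_parts` and `complete_upload` are hypothetical helpers, not AWS SDK calls, and no network requests are made.

```python
import math

MIB = 1024 * 1024
MIN_PART_SIZE = 5 * MIB          # every part except the last must be >= 5 MiB
MAX_PART_SIZE = 5 * 1024 * MIB   # a single part may be at most 5 GiB
MAX_PARTS = 10_000               # part numbers run from 1 to 10,000


def plan_parts(object_size: int, part_size: int = 8 * MIB) -> list[tuple[int, int, int]]:
    """Hypothetical helper: split an object into (part_number, start, end) ranges.

    `end` is exclusive. The part size is raised when the requested size
    would need more than MAX_PARTS parts.
    """
    if object_size <= 0:
        raise ValueError("object_size must be positive")
    part_size = max(part_size, MIN_PART_SIZE, math.ceil(object_size / MAX_PARTS))
    if part_size > MAX_PART_SIZE:
        raise ValueError("object too large for a single multipart upload")
    return [
        (number, start, min(start + part_size, object_size))
        for number, start in enumerate(range(0, object_size, part_size), start=1)
    ]


def complete_upload(uploaded: dict[int, bytes]) -> bytes:
    """Hypothetical stand-in for the 'complete' step: join parts by part number."""
    return b"".join(uploaded[number] for number in sorted(uploaded))


if __name__ == "__main__":
    data = bytes(range(256)) * 100_000        # 25,600,000 bytes of sample data
    plan = plan_parts(len(data))
    print(len(plan), "parts")                 # prints: 4 parts
    # Parts may finish uploading in any order; completion orders them by number.
    uploaded = {n: data[start:end] for n, start, end in reversed(plan)}
    assert complete_upload(uploaded) == data
```

Because each part is independent, a real client can upload parts in parallel and retry only the parts that fail before sending the completion request.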

You can use this API to upload new large objects or make a copy of an existing object (see Operations on objects). Multipart uploading is a three-step process: you initiate the upload, you upload the object parts, and after you have uploaded all the parts, you complete the multipart upload. Upon receiving the complete multipart upload request, Amazon S3 constructs the object from the uploaded parts, and you can then access the object just as you would any other object in your bucket. You can list all of your in-progress multipart uploads or get a list of the parts that you have uploaded for a specific multipart upload. Each of these operations is explained in this section.

Every interaction with Amazon S3 is either authenticated or anonymous.

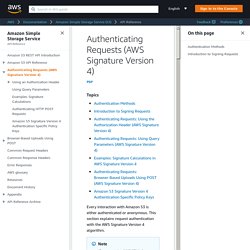

This section explains request authentication with the AWS Signature Version 4 algorithm. If you use the AWS SDKs (see Sample Code and Libraries) to send your requests, you don't need to read this section, because the SDK clients authenticate your requests by using access keys that you provide. Unless you have a good reason not to, you should always use the AWS SDKs. In Regions that support both signature versions, you can request that the AWS SDKs use a specific signature version. For more information, see Specifying Signature Version in Request Authentication in the Amazon Simple Storage Service Developer Guide. Authentication with AWS Signature Version 4 provides some or all of the following, depending on how you choose to sign your request:

- Verification of the identity of the requester: authenticated requests require a signature that you create by using your access keys (access key ID, secret access key).
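To show how the access keys turn into a signature, here is a hedged sketch of the core Signature Version 4 steps using only the Python standard library. The secret key is never sent. Instead, a signing key is derived by chaining HMAC-SHA256 over the date, Region, service and the literal `aws4_request`. That key then signs a string built from the algorithm name, timestamp, credential scope and the SHA-256 hash of the canonical request. Building a complete canonical request is out of scope here. The function names and the placeholder credentials are hypothetical.

```python
import hashlib
import hmac


def hmac_sha256(key: bytes, msg: str) -> bytes:
    """HMAC-SHA256 of a UTF-8 string, returned as raw bytes."""
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key: str, date_stamp: str, region: str, service: str) -> bytes:
    """Chain HMACs over the credential scope; date_stamp is YYYYMMDD."""
    k_date = hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    k_region = hmac_sha256(k_date, region)
    k_service = hmac_sha256(k_region, service)
    return hmac_sha256(k_service, "aws4_request")


def build_string_to_sign(amz_date: str, scope: str, canonical_request: str) -> str:
    """Algorithm, timestamp, scope, and the hex SHA-256 of the canonical request."""
    return "\n".join([
        "AWS4-HMAC-SHA256",
        amz_date,
        scope,
        hashlib.sha256(canonical_request.encode("utf-8")).hexdigest(),
    ])


def sign(signing_key: bytes, string_to_sign: str) -> str:
    """Final signature: hex-encoded HMAC-SHA256 of the string to sign."""
    return hmac.new(signing_key, string_to_sign.encode("utf-8"), hashlib.sha256).hexdigest()


if __name__ == "__main__":
    # Placeholder secret and an illustrative canonical request (not a real call).
    secret = "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY"
    scope = "20240115/us-east-1/s3/aws4_request"
    canonical_request = "GET\n/example.txt\n\nhost:examplebucket.s3.amazonaws.com\n\nhost\nUNSIGNED-PAYLOAD"
    key = derive_signing_key(secret, "20240115", "us-east-1", "s3")
    sts = build_string_to_sign("20240115T000000Z", scope, canonical_request)
    print(sign(key, sts))  # a 64-character hex signature
```

Because the signing key depends only on the date, Region and service, a client may cache it for the day instead of re-deriving it for every request.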

The request authentication discussed in this section is based on AWS Signature Version 2, a protocol for authenticating inbound API requests to AWS services.
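For comparison with Signature Version 4, here is a hedged sketch of the Signature Version 2 scheme. The StringToSign is the HTTP verb, Content-MD5, Content-Type, Date, the canonicalized `x-amz-*` headers and the canonicalized resource. The signature is Base64(HMAC-SHA1(secret key, StringToSign)), and it is sent as `Authorization: AWS AccessKeyId:Signature`. The header canonicalization is simplified: it does not merge repeated headers or unfold multi-line values. The function names and sample values are hypothetical.

```python
import base64
import hashlib
import hmac


def build_string_to_sign_v2(verb: str, content_md5: str, content_type: str,
                            date: str, amz_headers: dict[str, str], resource: str) -> str:
    """Assemble a SigV2 StringToSign (simplified header canonicalization)."""
    # CanonicalizedAmzHeaders: lowercase x-amz-* names, sorted, one "name:value\n" each.
    lowered = sorted((name.lower(), value.strip()) for name, value in amz_headers.items())
    canonical_amz = "".join(f"{name}:{value}\n" for name, value in lowered)
    return f"{verb}\n{content_md5}\n{content_type}\n{date}\n{canonical_amz}{resource}"


def sign_v2(secret_key: str, string_to_sign: str) -> str:
    """Base64(HMAC-SHA1(secret, UTF-8(StringToSign)))."""
    digest = hmac.new(secret_key.encode("utf-8"), string_to_sign.encode("utf-8"),
                      hashlib.sha1).digest()
    return base64.b64encode(digest).decode("ascii")


def authorization_header_v2(access_key: str, signature: str) -> str:
    """Value of the Authorization header for a SigV2 request."""
    return f"AWS {access_key}:{signature}"


if __name__ == "__main__":
    # Placeholder credentials; GET of /photos/puppy.jpg in a bucket named johnsmith.
    sts = build_string_to_sign_v2("GET", "", "", "Tue, 27 Mar 2007 19:36:42 +0000",
                                  {}, "/johnsmith/photos/puppy.jpg")
    sig = sign_v2("wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY", sts)
    print(authorization_header_v2("AKIAIOSFODNN7EXAMPLE", sig))
```

Unlike SigV4, the raw secret key signs every request directly, and nothing binds the signature to a Region or service. This is one reason newer Regions accept only Signature Version 4.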

Amazon S3 now supports Signature Version 4, a protocol for authenticating inbound API requests to AWS services, in all AWS Regions. At this time, AWS Regions created before January 30, 2014 continue to support the previous protocol, Signature Version 2. Regions created after January 30, 2014 support only Signature Version 4, so all requests to those Regions must be signed with Signature Version 4. For more information, see Examples: Browser-Based Upload using HTTP POST (Using AWS Signature Version 4) in the Amazon Simple Storage Service API Reference.

File upload

This example shows the complete process for constructing a policy and form that can be used to upload a file attachment.

Policy and form construction

The following policy supports uploads to Amazon S3 for the awsexamplebucket1 bucket.

Uploading files to AWS S3 directly from the browser not only improves performance but also reduces the load on your servers.
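The policy for awsexamplebucket1 is not reproduced in this clipping. Here is a hedged sketch of how a server could build and sign such a policy for a browser-based POST upload with Signature Version 4. The server base64-encodes a JSON document with an `expiration` and a list of `conditions`. It then signs that base64 string with the SigV4 signing key, and hands the browser the resulting hidden form fields. The helper name `build_post_fields`, the `uploads/` prefix, the 200 MiB size cap and the credentials are all hypothetical choices, not values from the original example.

```python
import base64
import datetime as dt
import hashlib
import hmac
import json


def hmac_sha256(key: bytes, msg: str) -> bytes:
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def derive_signing_key(secret_key: str, date_stamp: str, region: str, service: str) -> bytes:
    """SigV4 signing key: HMAC chain over date, Region, service, 'aws4_request'."""
    key = hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    for part in (region, service, "aws4_request"):
        key = hmac_sha256(key, part)
    return key


def build_post_fields(bucket: str, key_prefix: str, access_key: str, secret_key: str,
                      region: str, now: dt.datetime, expires_in: int = 3600,
                      max_bytes: int = 200 * 1024 * 1024) -> dict[str, str]:
    """Hypothetical helper: hidden form fields for a SigV4 browser POST upload.

    `now` must be a UTC datetime.
    """
    date_stamp = now.strftime("%Y%m%d")
    amz_date = now.strftime("%Y%m%dT%H%M%SZ")
    credential = f"{access_key}/{date_stamp}/{region}/s3/aws4_request"
    expiration = (now + dt.timedelta(seconds=expires_in)).strftime("%Y-%m-%dT%H:%M:%S.000Z")
    policy = {
        "expiration": expiration,
        "conditions": [
            {"bucket": bucket},
            ["starts-with", "$key", key_prefix],      # only keys under this prefix
            ["content-length-range", 0, max_bytes],    # cap the upload size
            {"x-amz-algorithm": "AWS4-HMAC-SHA256"},
            {"x-amz-credential": credential},
            {"x-amz-date": amz_date},
        ],
    }
    # The policy travels base64-encoded, and the signature covers that base64 string.
    policy_b64 = base64.b64encode(json.dumps(policy).encode("utf-8")).decode("ascii")
    signing_key = derive_signing_key(secret_key, date_stamp, region, "s3")
    signature = hmac.new(signing_key, policy_b64.encode("ascii"), hashlib.sha256).hexdigest()
    return {
        "key": key_prefix + "${filename}",   # S3 substitutes the uploaded file's name
        "x-amz-algorithm": "AWS4-HMAC-SHA256",
        "x-amz-credential": credential,
        "x-amz-date": amz_date,
        "policy": policy_b64,
        "x-amz-signature": signature,
    }


if __name__ == "__main__":
    now = dt.datetime.now(dt.timezone.utc)
    fields = build_post_fields("awsexamplebucket1", "uploads/", "AKIAIOSFODNN7EXAMPLE",
                               "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY", "us-east-1", now)
    for name, value in fields.items():
        print(f'<input type="hidden" name="{name}" value="{value}">')
```

The browser form then POSTs these fields, followed by the file input (S3 expects the file to be the last field in the form), to the bucket's endpoint. Because the server signs only the policy, it never handles the file bytes, which removes the upload bottleneck described below.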

Well, if your application uploads a file to your server, and your server then uploads it to an AWS S3 bucket, you have a bottleneck and a performance problem.

My clients were uploading large video files (100 MB on average) from various locations in Asia, Europe, and North America. My server is hosted on Heroku in Northern Virginia, but my main S3 bucket is in Ireland! It would be easier and more efficient if the web client could upload directly to that AWS S3 bucket. It seems trivial, but you may run into several problems, and the official AWS documentation doesn't tell you much. You will need to generate pre-signed AWS S3 URLs so that a user can write an object directly with a POST or PUT call.

Presigned URL (HTTP PUT Method)

A pre-signed URL is a URL that you generate with your AWS credentials and provide to your users to grant temporary access to a specific AWS S3 object. Presigned URLs are valid only for the specified duration.
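Here is a hedged, standard-library-only sketch of how such a pre-signed PUT URL is produced with Signature Version 4 query-string authentication. The algorithm, credential scope, timestamp, expiry and signed headers go into the query string. The payload is marked `UNSIGNED-PAYLOAD`, and the resulting signature is appended as `X-Amz-Signature`. It assumes a virtual-hosted-style endpoint (`bucket.s3.region.amazonaws.com`), so bucket names containing dots are not handled. `presign_put_url` and the sample credentials are hypothetical. In practice the AWS SDKs do this for you (for example, boto3's `generate_presigned_url("put_object", ...)`).

```python
import datetime as dt
import hashlib
import hmac
from urllib.parse import quote


def hmac_sha256(key: bytes, msg: str) -> bytes:
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def presign_put_url(bucket: str, key: str, access_key: str, secret_key: str,
                    region: str, now: dt.datetime, expires_in: int = 3600) -> str:
    """Hypothetical helper: SigV4 query-string-signed URL for an HTTP PUT."""
    if not 1 <= expires_in <= 604800:               # SigV4 presigned URLs last at most 7 days
        raise ValueError("expires_in must be between 1 and 604800 seconds")
    host = f"{bucket}.s3.{region}.amazonaws.com"     # assumed virtual-hosted-style endpoint
    date_stamp = now.strftime("%Y%m%d")              # `now` must be in UTC
    amz_date = now.strftime("%Y%m%dT%H%M%SZ")
    scope = f"{date_stamp}/{region}/s3/aws4_request"
    params = {
        "X-Amz-Algorithm": "AWS4-HMAC-SHA256",
        "X-Amz-Credential": f"{access_key}/{scope}",
        "X-Amz-Date": amz_date,
        "X-Amz-Expires": str(expires_in),
        "X-Amz-SignedHeaders": "host",
    }
    # Canonical query string: URI-encode names and values (including "/"), sort by name.
    canonical_query = "&".join(
        f"{quote(name, safe='')}={quote(value, safe='')}"
        for name, value in sorted(params.items()))
    canonical_uri = "/" + quote(key, safe="/")       # keep "/" separators in the object key
    canonical_request = "\n".join([
        "PUT",
        canonical_uri,
        canonical_query,
        f"host:{host}\n",        # canonical headers, each terminated by a newline
        "host",                  # signed headers
        "UNSIGNED-PAYLOAD",      # the body is not hashed for presigned S3 URLs
    ])
    string_to_sign = "\n".join([
        "AWS4-HMAC-SHA256",
        amz_date,
        scope,
        hashlib.sha256(canonical_request.encode("utf-8")).hexdigest(),
    ])
    signing_key = hmac_sha256(("AWS4" + secret_key).encode("utf-8"), date_stamp)
    for part in (region, "s3", "aws4_request"):
        signing_key = hmac_sha256(signing_key, part)
    signature = hmac.new(signing_key, string_to_sign.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"https://{host}{canonical_uri}?{canonical_query}&X-Amz-Signature={signature}"


if __name__ == "__main__":
    url = presign_put_url("my-video-bucket", "uploads/clip.mp4", "AKIAIOSFODNN7EXAMPLE",
                          "wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY", "eu-west-1",
                          dt.datetime.now(dt.timezone.utc), expires_in=900)
    print(url)
```

The server returns this URL to the web client, which sends the file as the body of an HTTP PUT to it before `X-Amz-Expires` elapses. The file bytes then go straight to the bucket's Region instead of passing through the application server.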

Alternative (HTTP POST Form Method)

Scale Indefinitely on S3 With These Secrets of the S3 Masters

In a great article, Amazon S3 Performance Tips & Tricks, Doug Grismore, Director of Storage Operations for AWS, has outed the secret arcana normally reserved for Premium Developer Support customers on how to really use S3:

- Size matters. Workloads with fewer than 50-100 total requests per second don't require any special effort. Customers that routinely perform thousands of requests per second need a plan.
- Automated partitioning. Automated systems scale S3 horizontally by continuously splitting data into partitions, based on high request rates and on the number of keys in a partition (too many keys in one partition leads to slow lookups). Lessons you've learned with sharding may also apply to S3.

A List of Amazon S3 Backup Tools

In an effort to replace my home backup server with Amazon's S3, I've been collecting a list of Amazon S3 compatible backup tools to look at.

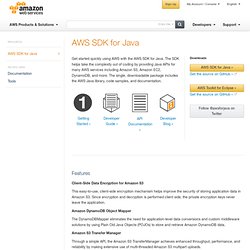

Here's what I've discovered, followed by my requirements.

The List

I've evaluated exactly zero of these so far. That's next.

- s3sync.rb is written in Ruby as a sort of rsync clone, replacing the Perl script s3sync, which is now abandonware.

For those keeping track, the non-S3 options suggested in the comment on my previous post are Carbonite, rsync.net, and a DreamHost account.

AWS SDK for Java: A Java Library for Amazon S3, Amazon EC2, and more

- Client-Side Data Encryption for Amazon S3: This easy-to-use, client-side encryption mechanism helps improve the security of storing application data in Amazon S3. Since encryption and decryption are performed client-side, the private encryption keys never leave the application.
- Amazon DynamoDB Object Mapper: The DynamoDBMapper eliminates the need for application-level data conversions and custom middleware solutions by using Plain Old Java Objects (POJOs) to store and retrieve Amazon DynamoDB data.

Amazon S3 tools: About S3tools project

S3cmd: Command Line S3 Client and Backup for Linux and Mac

Amazon S3 is a reasonably priced data storage service, ideal for off-site file backups, file archiving, web hosting, and other data storage needs. It is generally more reliable than regular web hosting for storing your files and images. Check out About Amazon S3 to find out more.

S3cmd is a free command-line tool and client for uploading, retrieving, and managing data in Amazon S3 and other cloud storage service providers that use the S3 protocol, such as Google Cloud Storage or DreamHost DreamObjects. S3cmd is written in Python. Many features and options have been added to S3cmd since its very first release in 2008; we recently counted more than 60 command-line options, including multipart uploads, encryption, incremental backup, s3 sync, ACL and metadata management, S3 bucket size, bucket policies, and more!

S3cmd System Requirements: S3cmd requires Python 2.6 or newer.